Artificial intelligence has reshaped the financial sector, processing millions of transactions, assessing creditworthiness in seconds, and improving fraud detection rates. Yet beneath these operational gains lies a troubling reality: AI systems can perpetuate and even amplify the very biases we hoped technology would eliminate.

The Promise and Peril of AI in Financial Services

AI in financial services delivers significant operational benefits, including efficiency gains and improved fraud detection, but it also introduces ethical risks because these systems are trained on historical data that reflects decades of discriminatory practices. A McKinsey Global Institute report found that AI adoption in financial services could generate up to $1 trillion in additional value annually, but warned that bias risks must be actively managed (McKinsey, 2023). When AI learns from biased lending histories and systemic inequality, it can systematize prejudice behind a veneer of mathematical objectivity, producing outcomes that are discriminatory yet difficult to challenge.

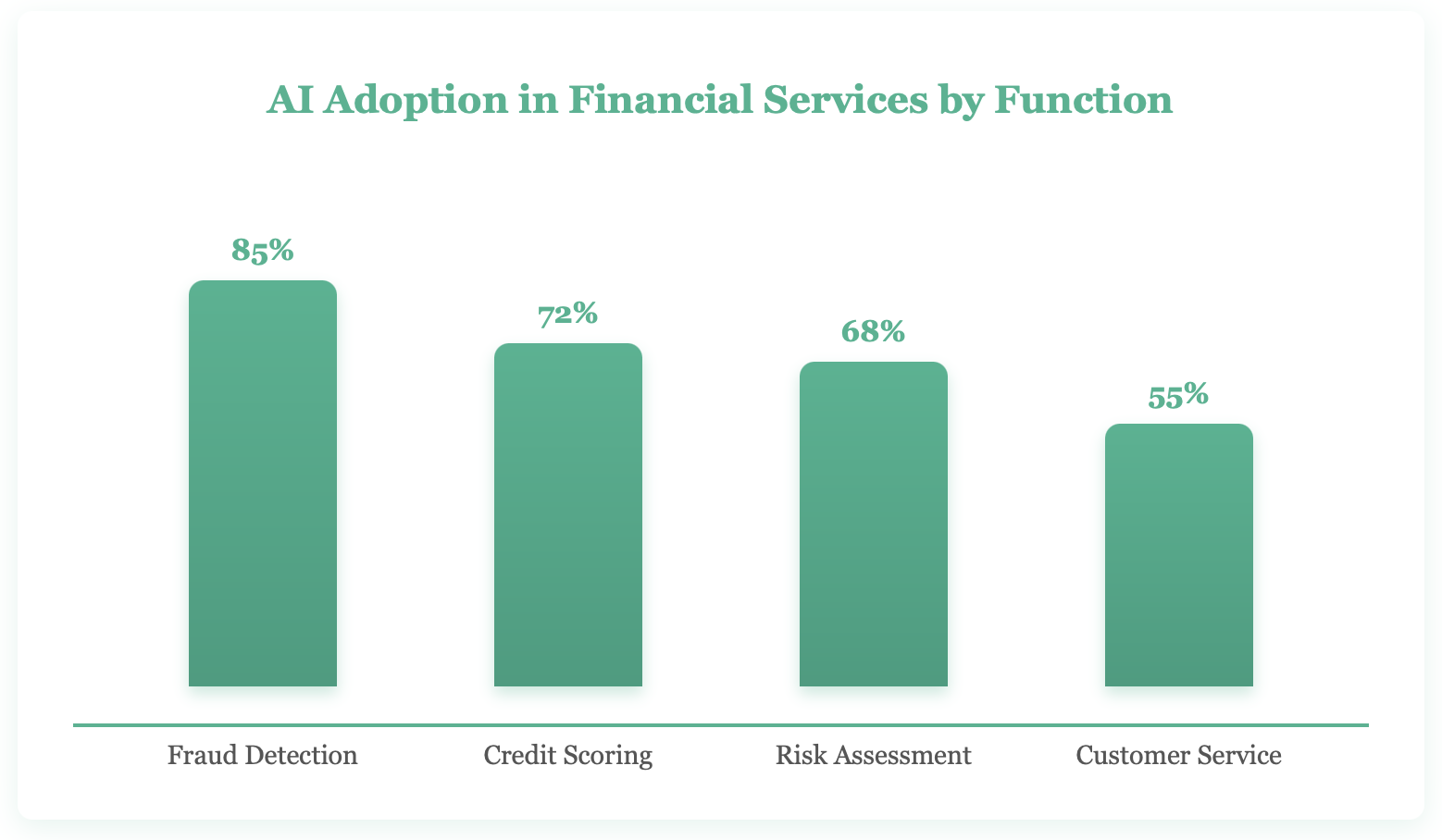

Financial institutions have eagerly adopted AI for everything from algorithmic trading to customer service chatbots. The appeal is obvious: AI can analyze vast datasets, identify patterns invisible to human analysts, and operate 24/7 without fatigue. According to Deloitte's AI in Banking survey, 86% of financial services AI adopters reported measurable returns on their AI investments, with fraud detection and operational efficiency as the top benefit areas (Deloitte, 2024).

But here's where things get complicated. These same systems that promise objectivity are trained on historical data—data that reflects decades of human bias, discriminatory lending practices, and systemic inequality. When an AI learns from this tainted history, it doesn't just replicate past decisions; it systematizes them, giving prejudice the veneer of mathematical objectivity.

The Bias Problem: When Algorithms Discriminate

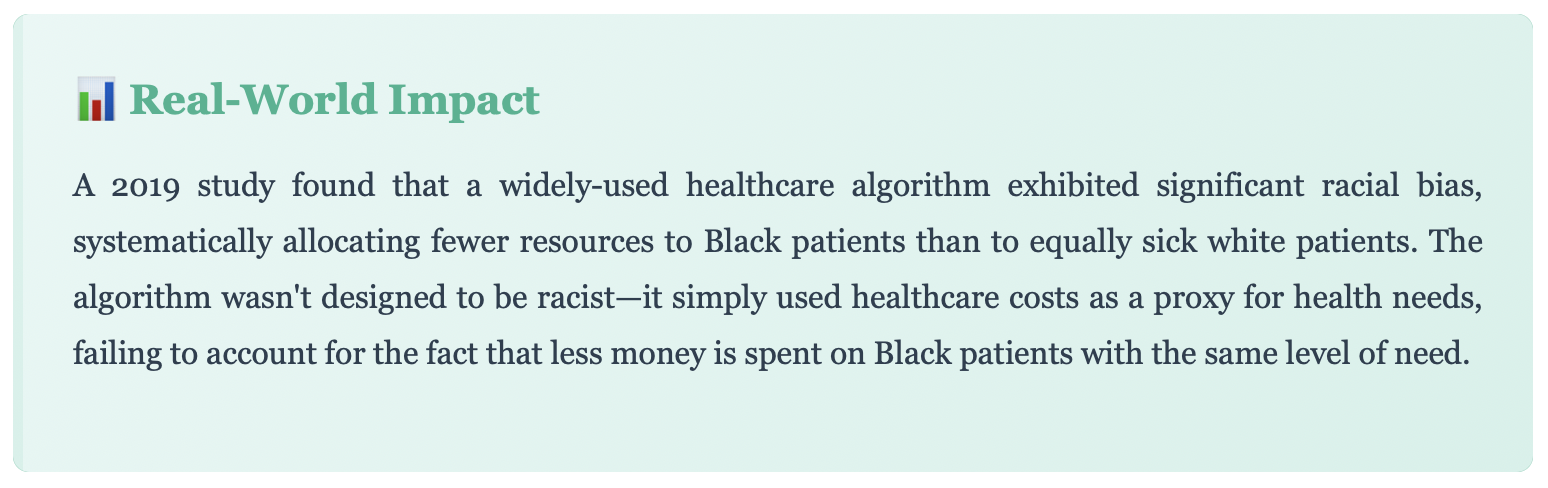

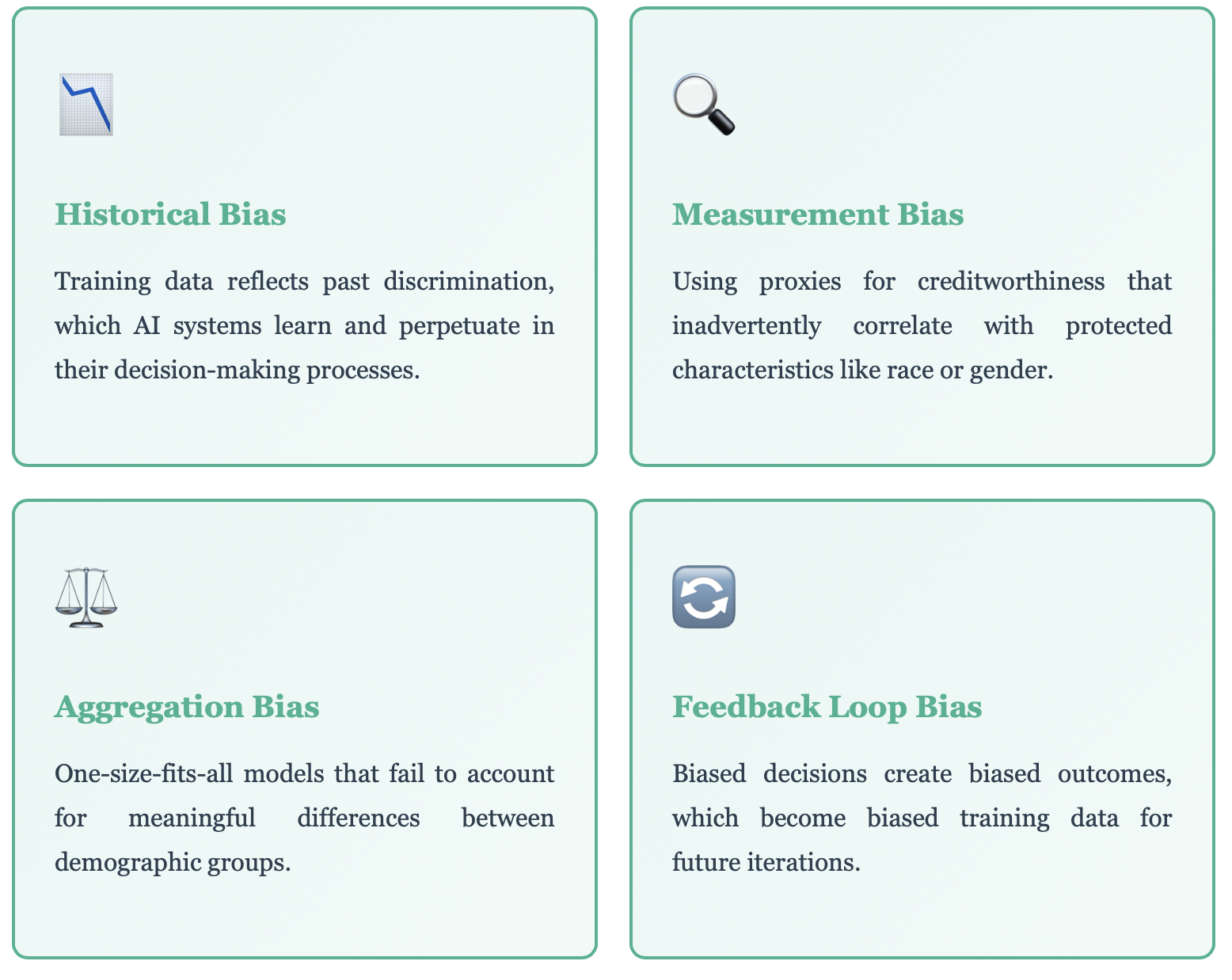

AI bias in finance most commonly manifests as proxy discrimination, where algorithms use seemingly neutral variables like zip codes or employment patterns that correlate strongly with protected characteristics such as race or ethnicity. A Brookings Institution study found that algorithmic lending decisions resulted in Black and Hispanic borrowers paying 5-9 basis points more in interest than white borrowers with equivalent credit profiles (Brookings, 2022). This digital redlining is harder to detect and challenge than explicit discrimination because the system never directly considers protected categories, yet produces disparate outcomes by optimizing on patterns embedded in historically biased data. Former SEC Chair Gary Gensler warned that "when it comes to AI and finance, the potential for bias at scale is a risk that regulators and firms must take seriously" (SEC, 2023).

Consider a real-world scenario: an AI credit scoring system consistently denies loans to applicants from certain zip codes. The algorithm doesn't explicitly consider race or ethnicity—it's been carefully designed to avoid protected characteristics. Yet the outcome is discriminatory because those zip codes correlate strongly with minority communities.

This is what experts call "proxy discrimination," and it's insidiously difficult to detect and prevent. The AI isn't being overtly racist; it's simply optimizing for patterns in historical data where systemic discrimination already existed. The result? A digital redlining that's harder to challenge precisely because it's cloaked in algorithmic neutrality.
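To make proxy discrimination concrete, here is a minimal sketch using made-up data. The zip codes, default rates, score cutoff, and applicants are all hypothetical. The toy approval rule never sees the protected `group` field (the audit keeps it only to measure outcomes), and both groups have identical credit scores, yet a zip-level penalty inherited from past lending data yields very different approval rates. The final step computes an adverse impact ratio, the quantity behind the "four-fifths rule" screening heuristic for disparate impact.

```python
from collections import defaultdict

# Hypothetical applicants: (zip_code, group, credit_score).
# The model never sees "group"; the audit keeps it only to measure outcomes.
# Both groups have the same credit scores: {700, 680, 660, 650}.
APPLICANTS = [
    ("10001", "A", 700), ("10001", "A", 680), ("10001", "A", 660), ("10001", "B", 650),
    ("20002", "B", 700), ("20002", "B", 680), ("20002", "B", 660), ("20002", "A", 650),
]

# Hypothetical historical default rates (in percent) by zip code, inherited
# from past lending data that may itself reflect discriminatory practices.
HISTORICAL_DEFAULT_PCT = {"10001": 3, "20002": 12}
SCORE_CUTOFF = 640


def approve(zip_code: str, credit_score: int) -> bool:
    """Toy 'group-blind' rule: uses only zip code and credit score."""
    penalty = HISTORICAL_DEFAULT_PCT[zip_code] * 5  # 5 score points per percent of default
    return credit_score - penalty >= SCORE_CUTOFF


def approval_rates(applicants):
    """Approval rate per group, computed after the fact for auditing."""
    approved, total = defaultdict(int), defaultdict(int)
    for zip_code, group, score in applicants:
        total[group] += 1
        approved[group] += int(approve(zip_code, score))
    return {group: approved[group] / total[group] for group in total}


rates = approval_rates(APPLICANTS)
# Adverse impact ratio: lowest group approval rate divided by the highest.
# The "four-fifths rule" heuristic flags ratios below 0.8 for closer review.
adverse_impact_ratio = min(rates.values()) / max(rates.values())
flagged = adverse_impact_ratio < 0.8

print(rates)                                    # {'A': 0.75, 'B': 0.25}
print(round(adverse_impact_ratio, 2), flagged)  # 0.33 True
```

Group A is approved 75% of the time and group B 25%, a ratio of about 0.33, even though the rule never looks at group membership and both groups bring the same scores. The zip code alone carries the disparity.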

Types of AI Bias in Finance

The Transparency Challenge: Black Boxes and Accountability

Many AI systems in finance, particularly deep neural networks, operate as black boxes: even their creators cannot fully explain specific decisions. This creates legal and ethical problems, because the Equal Credit Opportunity Act and the Fair Credit Reporting Act give consumers the right to know why a credit application was denied, yet algorithms that weigh thousands of variables defy human interpretation. Regulators are responding; the EU's AI Act, for example, now requires explainability for high-risk financial AI systems such as credit scoring.

Beyond bias, a second concern is the opacity of many AI systems. Modern machine learning models, particularly deep neural networks, often function as "black boxes": even their creators can't fully explain how they arrive at specific decisions. This lack of transparency creates a cascade of ethical and practical problems.

When a bank denies your loan application, you have a legal right to know why. But what happens when the decision was made by an algorithm that considered 5,000 variables in ways that defy human interpretation? The bank might tell you your "score was too low," but that's hardly a meaningful explanation when neither you nor the loan officer understands how that score was calculated.
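One practical response is to attribute each individual score to its inputs and report the inputs that pulled it down the most. The sketch below computes exact Shapley values (the quantity that SHAP approximates for larger models) by brute-force enumeration, not with the SHAP library. The scoring model, its three features, and the "typical approved applicant" used as a baseline are all hypothetical, and enumeration is only feasible for a handful of features. Whether such attributions meet a regulator's notion of a specific reason is a separate legal question.

```python
from itertools import combinations
from math import factorial

FEATURES = ["credit_utilization", "years_of_history", "recent_inquiries"]


def score(x: dict) -> float:
    """Hypothetical scoring model: higher means more creditworthy."""
    s = 700 - 200 * x["credit_utilization"] + 5 * x["years_of_history"] - 15 * x["recent_inquiries"]
    # Interaction term: many recent inquiries hurt more when credit history is short.
    if x["years_of_history"] < 3 and x["recent_inquiries"] > 2:
        s -= 40
    return s


def shapley_values(model, x: dict, baseline: dict) -> dict:
    """Exact Shapley attributions of model(x) - model(baseline), enumerating all coalitions.

    Features outside a coalition are held at the baseline applicant's values.
    Cost grows as 2**n, so this is only practical for a few features.
    """
    n = len(FEATURES)
    phi = {}
    for f in FEATURES:
        others = [g for g in FEATURES if g != f]
        total = 0.0
        for k in range(n):  # coalition sizes 0 .. n-1
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for coalition in combinations(others, k):
                without_f = {g: (x[g] if g in coalition else baseline[g]) for g in FEATURES}
                with_f = {**without_f, f: x[f]}
                total += weight * (model(with_f) - model(without_f))
        phi[f] = total
    return phi


applicant = {"credit_utilization": 0.9, "years_of_history": 2, "recent_inquiries": 4}
baseline = {"credit_utilization": 0.3, "years_of_history": 10, "recent_inquiries": 1}

phi = shapley_values(score, applicant, baseline)
# The most negative attributions become candidate reasons for an adverse action notice.
principal_reasons = sorted(phi, key=phi.get)[:2]
print({f: round(v, 1) for f, v in phi.items()})
print(principal_reasons)  # ['credit_utilization', 'recent_inquiries']
```

By construction the attributions sum to the gap between this applicant's score and the baseline's (-245 points here). The 40-point interaction penalty is split evenly between credit history and inquiries, which is why `recent_inquiries` lands at -65 rather than the -45 its linear term alone would suggest.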

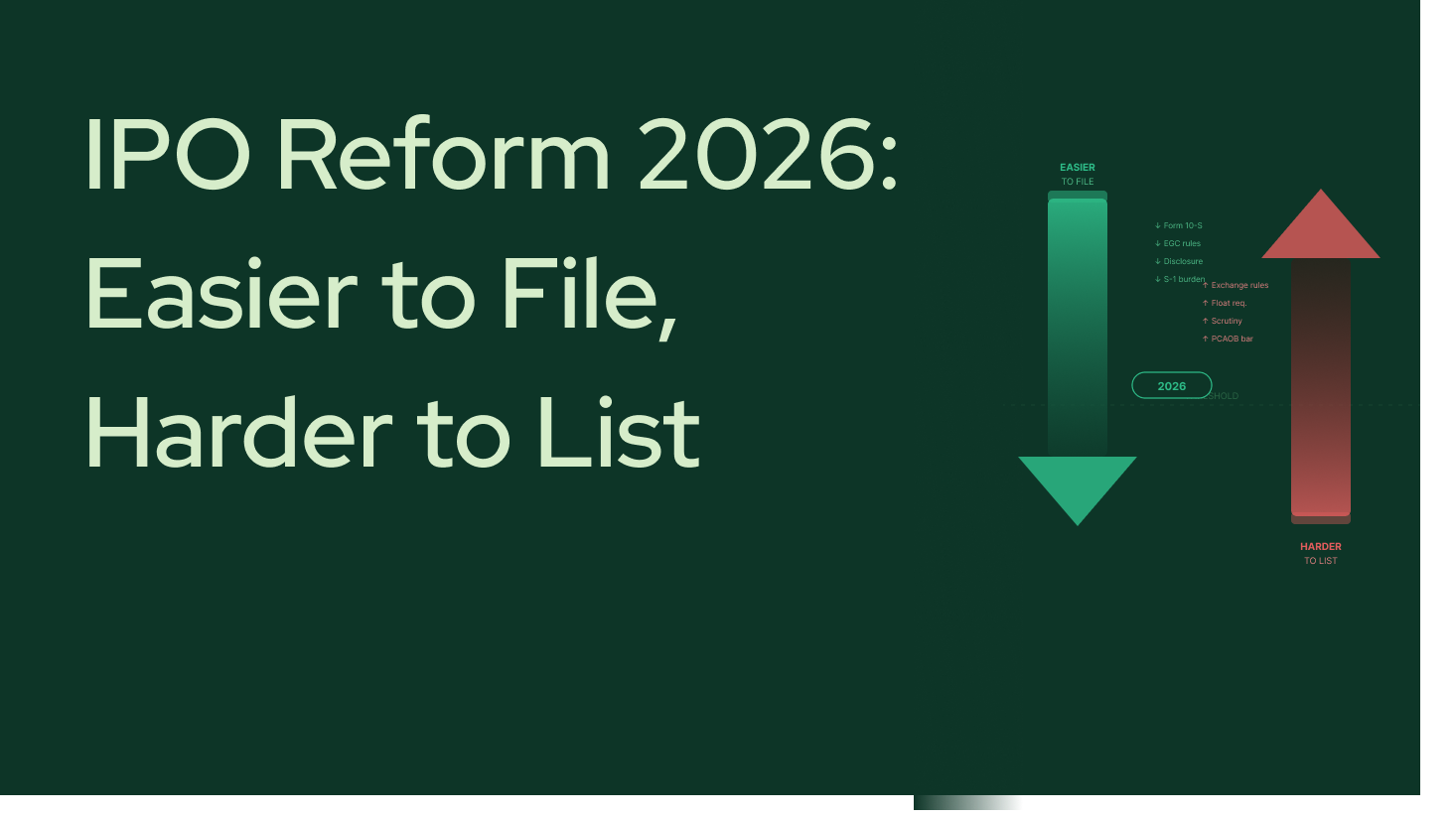

Regulatory Requirements and Disclosure Mandates

Regulators worldwide are grappling with how to ensure AI transparency in finance. The European Union's AI Act classifies many financial AI systems, including credit scoring, as "high-risk," requiring extensive documentation, human oversight, and explainability. In the United States, the Equal Credit Opportunity Act and its implementing rule, Regulation B, require lenders to give applicants the specific principal reasons for a denial (a statement that the applicant failed to achieve a qualifying score is not sufficient), though enforcement in the age of AI remains inconsistent.

But compliance isn't just about following regulations—it's about building trust. Financial institutions that can't explain their AI decisions face reputational risks, legal challenges, and erosion of customer confidence. In a sector built on trust, opacity is a liability.

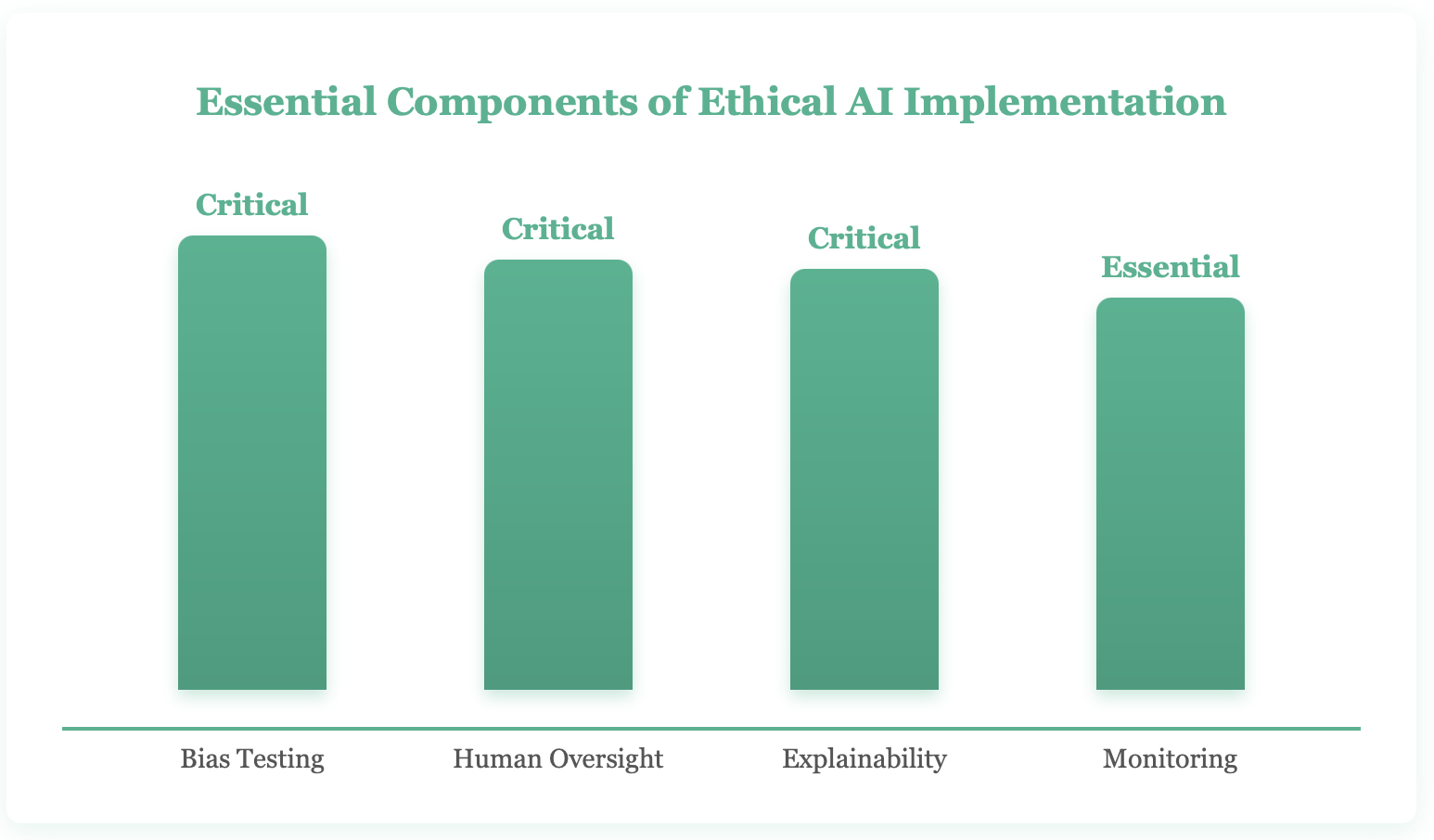

Building Ethical AI: Principles and Practices

Building ethical AI in finance requires five core principles: fairness by design using diverse training data and disparate impact testing, explainable AI through techniques like LIME and SHAP, human-in-the-loop oversight for high-stakes decisions, continuous monitoring and bias auditing as models evolve, and diverse development teams whose varied perspectives help identify potential biases before deployment. The National Institute of Standards and Technology (NIST) released its AI Risk Management Framework in 2023, providing practical guidance for organizations to identify and mitigate AI bias (NIST, 2023).

So how do we harness AI's potential while mitigating its risks? The answer requires a multifaceted approach that combines technical solutions, governance frameworks, and cultural change within financial institutions.

Key Principles for Ethical AI in Finance

- Fairness by Design: Building AI systems with fairness constraints from the start, not as an afterthought. This includes using diverse training data, testing for disparate impact, and implementing fairness metrics alongside accuracy metrics.

- Explainable AI: Prioritizing models that can provide clear, actionable explanations for their decisions. Techniques like LIME (Local Interpretable Model-agnostic Explanations) and SHAP (SHapley Additive exPlanations) help decode complex models.

- Human-in-the-Loop: Maintaining meaningful human oversight, especially for high-stakes decisions. AI should augment human judgment, not replace it entirely.

- Continuous Monitoring: Regularly auditing AI systems for bias and drift. Models that performed fairly on training data can develop biases over time as conditions change.

- Diverse Development Teams: Ensuring that the people building AI systems represent diverse perspectives and experiences, helping identify potential biases before deployment.
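Continuous monitoring is often operationalized with the Population Stability Index (PSI), which compares how a model's scores (or inputs) are distributed now against a reference period: PSI = Σ (a_i - e_i) · ln(a_i / e_i) over bins, where e_i and a_i are the reference and recent shares in bin i. The sketch below is a minimal version under stated assumptions: the bin edges and score samples are hypothetical, empty bins are floored at a small epsilon to avoid log(0), and the 0.25 alert threshold is a widely quoted rule of thumb, not a regulatory standard. Drift alone does not prove bias, but a large shift is a reasonable trigger for re-running a fairness audit.

```python
import math
from bisect import bisect_right

# Hypothetical score bins: <600, [600, 650), [650, 700), [700, 750), >=750.
EDGES = [600, 650, 700, 750]
PSI_ALERT_THRESHOLD = 0.25  # rule of thumb, not a regulatory standard


def bin_proportions(values, edges):
    """Share of values falling in each bin defined by the sorted edges."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def population_stability_index(expected, actual, edges, eps=1e-6):
    """PSI = sum over bins of (a_i - e_i) * ln(a_i / e_i)."""
    psi = 0.0
    for e, a in zip(bin_proportions(expected, edges), bin_proportions(actual, edges)):
        e, a = max(e, eps), max(a, eps)  # floor empty bins to avoid log(0)
        psi += (a - e) * math.log(a / e)
    return psi


# Hypothetical scores from the validation period and from the latest month.
reference_scores = [580, 620, 640, 660, 680, 690, 710, 720, 740, 760]
recent_scores = [560, 570, 590, 610, 620, 640, 660, 680, 710, 730]

psi = population_stability_index(reference_scores, recent_scores, EDGES)
needs_review = psi > PSI_ALERT_THRESHOLD
print(round(psi, 2), needs_review)  # 1.49 True
```

Most of the index here comes from the top bin emptying out entirely. The epsilon floor keeps that term finite, but its size then depends on the chosen `eps`, so bins are usually drawn wide enough that none is expected to be empty.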

The Path Forward: Automated Disclosures with Integrity

AI-generated financial disclosures must balance regulatory compliance, accuracy, and genuine comprehensibility rather than simply checking compliance boxes. PCAOB Chair Erica Williams has emphasized that "as technology increasingly shapes financial reporting, we must ensure that transparency and accountability remain at the core of our capital markets" (PCAOB, 2023). Responsibility for ethical AI in finance is shared across financial institutions conducting bias audits, regulators defining practical explainability standards, technology providers building fairness into products, and consumer advocacy groups demanding accountability when AI systems produce discriminatory outcomes.

Automated disclosures represent a particularly interesting intersection of AI capability and ethical responsibility. AI systems now generate financial disclosures, risk assessments, and investment recommendations at scale. These automated communications must balance regulatory compliance, comprehensibility, and accuracy—all while being generated by algorithms.

The challenge is ensuring these disclosures don't simply check compliance boxes but actually inform consumers meaningfully. An AI that generates technically accurate but incomprehensible disclosures serves neither the institution's nor the consumer's interests.
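Comprehensibility is hard to measure, but a crude automated check can at least catch the worst cases before a generated disclosure goes out. The sketch below scores text with the Flesch Reading Ease formula, 206.835 - 1.015 × (words per sentence) - 84.6 × (syllables per word), where higher scores mean easier reading. The syllable counter is a rough vowel-group heuristic, the sample texts are invented, and a readability score says nothing about whether a disclosure is accurate or complete, so it can only complement human review.

```python
import re


def count_syllables(word: str) -> int:
    """Rough heuristic: count vowel groups, ignoring a silent trailing 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_reading_ease(text: str) -> float:
    """Flesch Reading Ease: higher scores indicate easier text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return 206.835 - 1.015 * (len(words) / len(sentences)) - 84.6 * (syllables / len(words))


# Invented examples: a plain and a dense explanation of the same denial.
plain = "We could not approve your loan. Your debt is high compared to your income. You can ask us for details."
dense = ("Notwithstanding the aforementioned considerations, the institution's determination "
         "regarding creditworthiness incorporates multidimensional algorithmic evaluation "
         "of heterogeneous applicant characteristics.")

for label, text in [("plain", plain), ("dense", dense)]:
    print(label, round(flesch_reading_ease(text), 1))
```

One way to use such a score would be a hypothetical policy that routes any generated text below a minimum score to a human editor; the threshold would be an institutional choice, not something the formula prescribes.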

Stakeholder Responsibilities

Creating ethical AI in finance isn't the responsibility of any single group—it requires coordinated action across the ecosystem:

**Financial Institutions** must invest in ethical AI infrastructure, conduct regular bias audits, and foster cultures that prioritize fairness alongside profitability. This means sometimes accepting slightly lower short-term performance for better long-term outcomes and reduced risk.

**Regulators** need to update frameworks for an AI-driven world, providing clear guidance while avoiding stifling innovation. This includes defining what "explainable" means in practice and establishing standards for bias testing.

**Technology Providers** should build fairness and transparency into their products from the ground up, not as optional add-ons. They must also help clients understand their systems' limitations.

**Consumers and Advocacy Groups** must remain vigilant, demanding accountability when AI systems produce discriminatory outcomes and supporting regulations that protect vulnerable populations.