Imagine you ask your bank's AI assistant about the interest rate on a savings account. It tells you 4.75%. You move $50,000 based on that figure. Later, you discover the actual rate was 2.1% and the AI had simply hallucinated the number. Who do you call? Who do you sue?

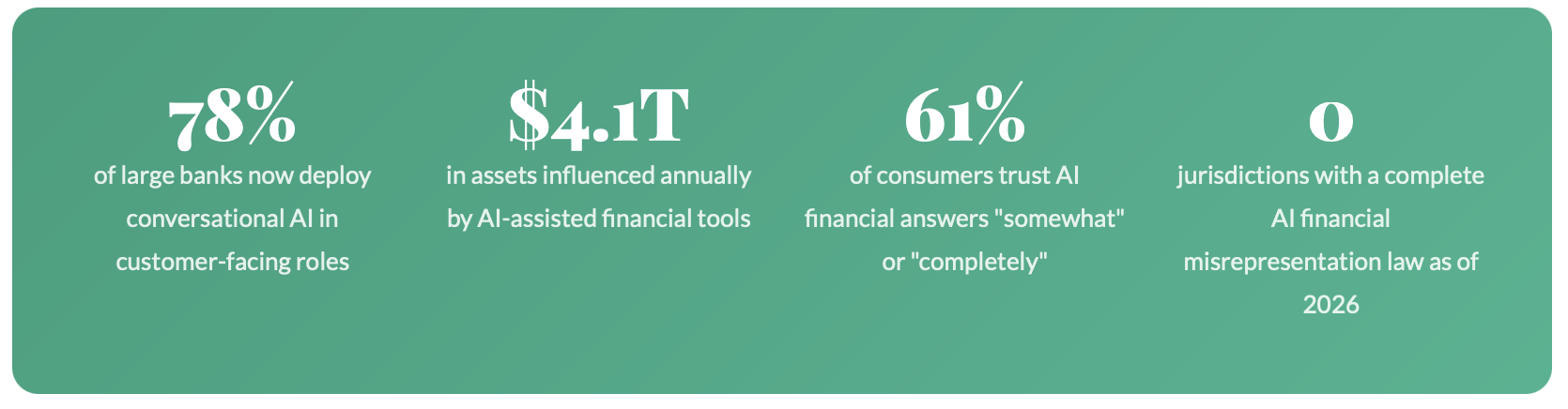

This isn't a hypothetical engineered for a law school exam. It is a scenario playing out quietly and incrementally across fintech platforms, robo-advisors, insurance portals, and wealth management apps worldwide. AI agents are no longer just answering FAQs. They are quoting rates, explaining policy terms, describing fund performance, and, in some jurisdictions, executing trades.

The financial industry has spent decades building compliance infrastructure around the concept of the "regulated human," a licensed professional who can be held accountable for advice. That infrastructure is structurally unprepared for the rise of the digital employee: an AI agent that acts with authority, speaks with confidence, and has no license to revoke. Today's AI financial tools range from simple chatbots to sophisticated robo-advisors that autonomously manage client portfolios, and the line between "tool" and "advisor" is blurring fast.

The Anatomy of an AI Financial Misrepresentation

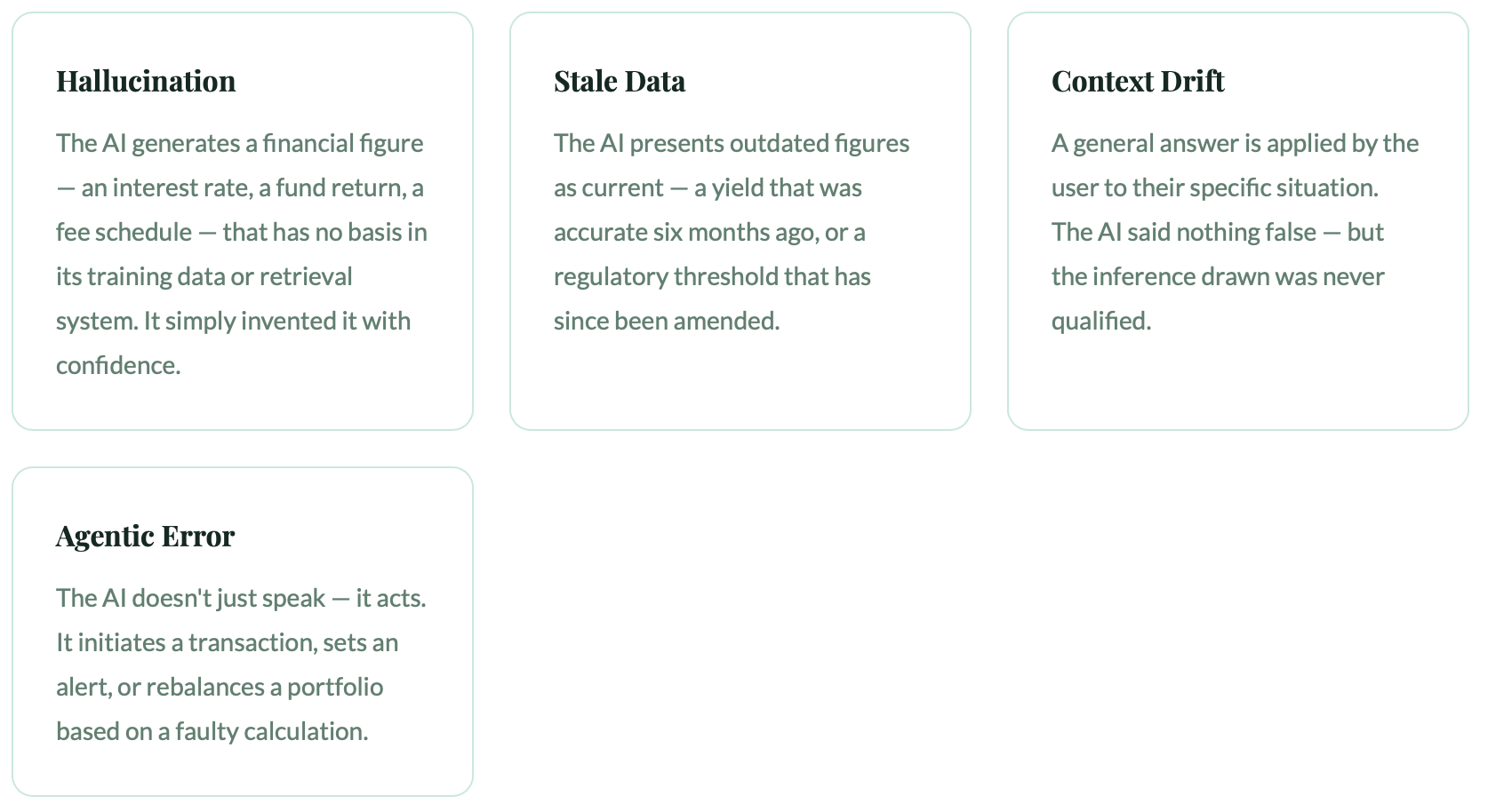

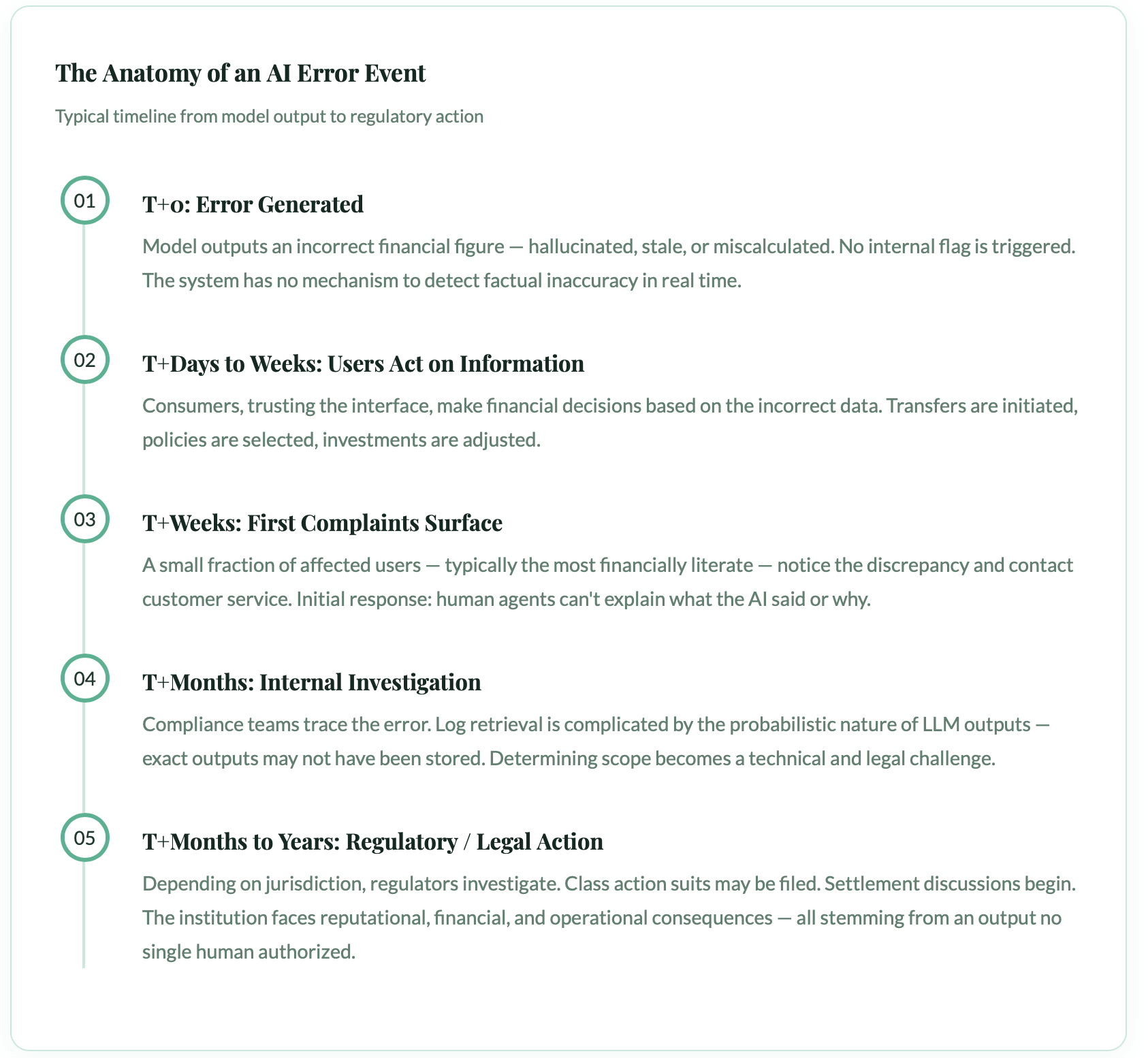

AI financial misrepresentation spans a spectrum of failure modes: hallucinations, where the model generates fabricated figures; stale data errors, caused by outdated information pipelines; context drift, where accurate data is applied to the wrong product or situation; and agentic errors, where an autonomous AI takes actions based on flawed reasoning. Each failure mode implicates different actors in the technology and deployment chain.

AI financial misrepresentation is not a single, clean event—it is a spectrum, and different parts of the spectrum carry very different legal weight.

Each of these failure modes produces harm in different ways and implicates different actors. A hallucination points back to the model. Stale data might implicate the data pipeline. Context drift might be a failure of UI design. An agentic error cascades into product liability territory.
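To make the distinction between failure modes more concrete, here is a minimal sketch of a pre-delivery check that a deploying institution might place between the model and the customer. It assumes the institution keeps an authoritative rate table (a "system of record") with update timestamps. `RateRecord`, `check_quoted_rates`, the 24-hour freshness window, and the 0.01 percentage-point tolerance are all hypothetical choices made for illustration, not any vendor's API. The check holds a draft when a quoted figure does not match the system of record (a hallucination, or a figure borrowed from the wrong product through context drift) and refuses to vouch for a source that has not been refreshed (stale data). Agentic errors involve actions rather than statements, so they need separate controls that this sketch does not cover.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Dict, Optional, Tuple


@dataclass
class RateRecord:
    """Hypothetical system-of-record entry for one product's current rate."""
    product_id: str
    annual_rate_pct: float
    updated_at: datetime  # timezone-aware timestamp of the last update


# Naive pattern for figures such as "4.75%" or "2.1 %". A production system would
# more likely require the model to emit structured fields than parse free text.
PERCENT_PATTERN = re.compile(r"(\d+(?:\.\d+)?)\s*%")


def check_quoted_rates(
    response_text: str,
    product_id: str,
    rates: Dict[str, RateRecord],
    max_age: timedelta = timedelta(hours=24),
    tolerance_pct: float = 0.01,
    now: Optional[datetime] = None,
) -> Tuple[bool, str]:
    """Decide whether a drafted AI response may be sent to the customer.

    Returns (approved, reason). Any failure means the draft should be held
    and routed to a human reviewer instead of being delivered.
    """
    now = now or datetime.now(timezone.utc)
    record = rates.get(product_id)
    if record is None:
        # Unknown product: there is nothing to verify against, so hold the draft.
        return False, "no authoritative rate on file"
    if now - record.updated_at > max_age:
        # Stale-data guard: the source itself may be out of date.
        return False, "authoritative rate is stale"
    for match in PERCENT_PATTERN.findall(response_text):
        quoted = float(match)
        if abs(quoted - record.annual_rate_pct) > tolerance_pct:
            # Catches fabricated figures and figures taken from the wrong product.
            return False, f"quoted {quoted}% but system of record says {record.annual_rate_pct}%"
    # A response that quotes no percentage at all passes this particular check.
    return True, "ok"


if __name__ == "__main__":
    as_of = datetime(2025, 1, 1, 12, tzinfo=timezone.utc)
    rates = {"SAV-01": RateRecord("SAV-01", 2.1, datetime(2025, 1, 1, 9, tzinfo=timezone.utc))}
    print(check_quoted_rates("Your savings rate is 4.75%.", "SAV-01", rates, now=as_of))
    # (False, 'quoted 4.75% but system of record says 2.1%')
```

Where each check fails also hints at where responsibility sits: a mismatch against a fresh record points at the model or its prompt layer, while a stale record points at the data pipeline feeding the system of record.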

REAL-WORLD SCENARIO

In late 2024, a major European wealth management firm's AI assistant incorrectly described the tax treatment of a specific ETF class to over 3,200 clients. The error stemmed from a regulatory update that had been applied to the live data but not yet reflected in the model's fine-tuned prompt layer. Clients who acted on the advice faced unexpected tax bills averaging €1,400. The firm settled quietly, and no public enforcement action has been taken so far.

The Responsibility Stack: Everyone Is Involved, No One Is Clearly Liable

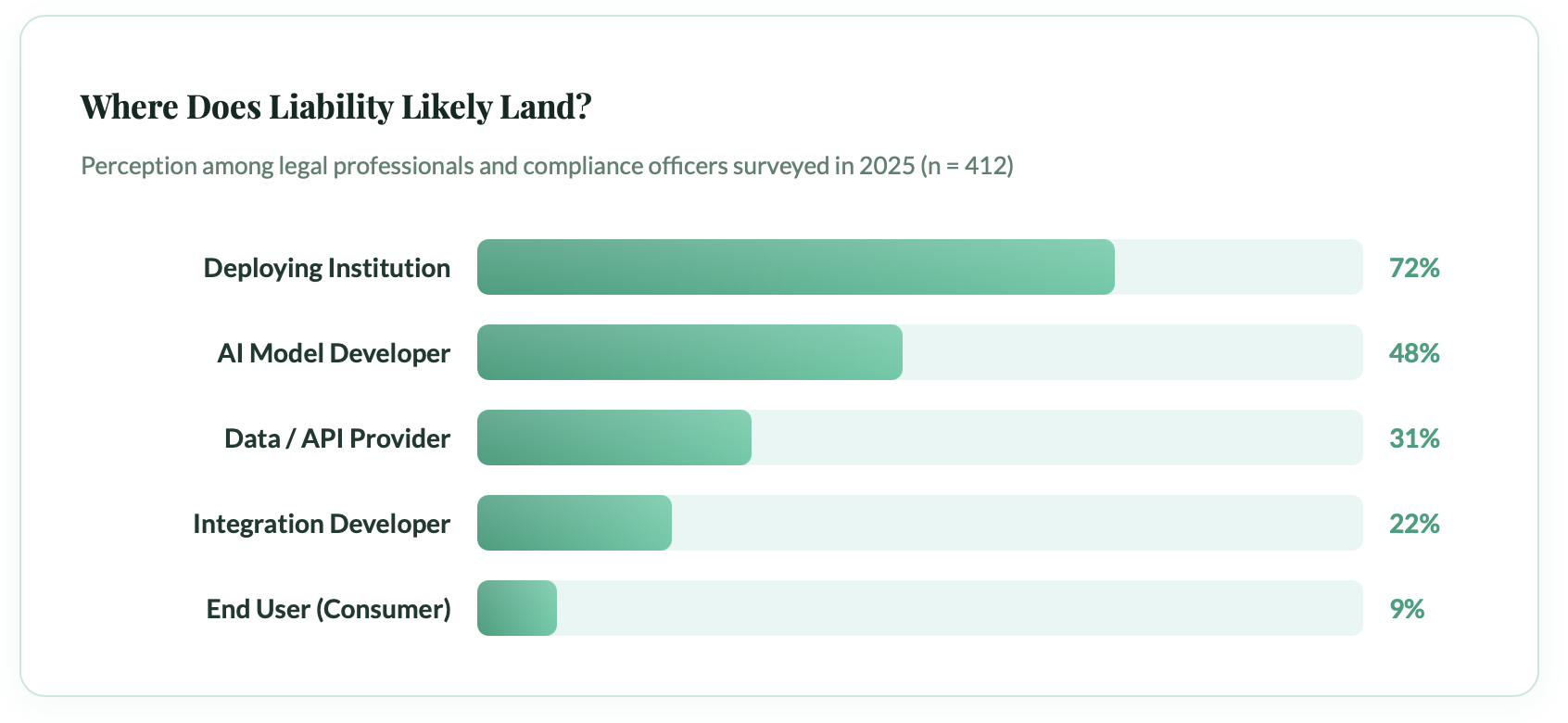

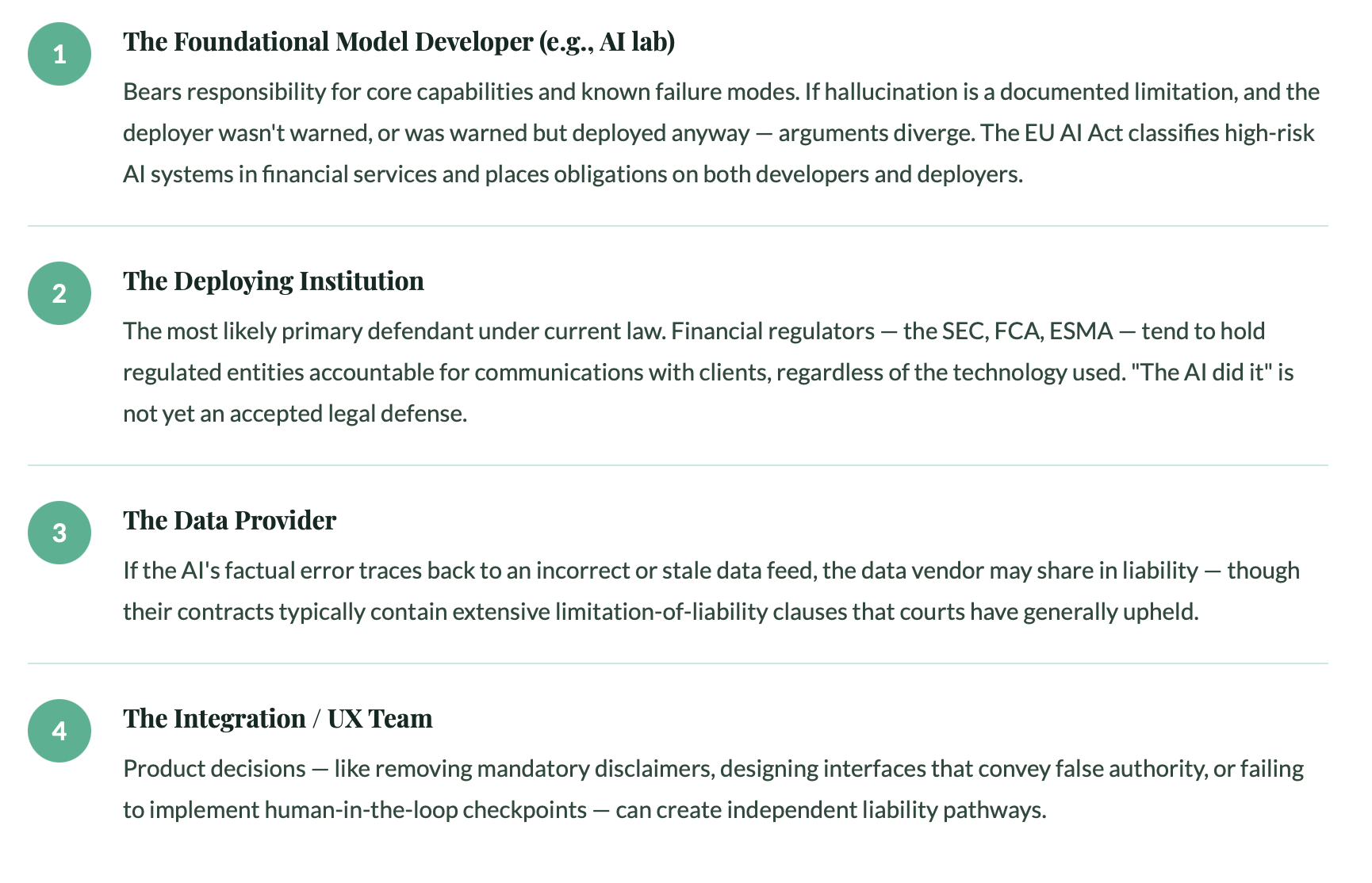

Liability for AI financial misrepresentation distributes across multiple parties: the foundation model provider, the fine-tuning or integration vendor, the deploying financial institution, and internal teams responsible for data pipelines and UI design. The deploying institution typically faces the greatest exposure because it controls the customer relationship and owns the Terms of Service, but no single party bears clearly defined legal liability under current frameworks.

When an AI agent misrepresents a financial fact, the blame doesn't land neatly in one place. Instead, it is distributed across a chain of principals, each with a partial role and each with a strong incentive to point at someone else.

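The deploying institution (the bank, the fintech, the insurer) faces the most exposure because it controls the user relationship and typically owns the Terms of Service. But "most exposed" does not mean "clearly liable." In practice, the responsibility stack runs from the foundation model provider, through the fine-tuning or integration vendor, to the deploying institution and the internal teams that own its data pipelines and interface design.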

The "Ostrich Problem"

Legal scholars have noted that financial institutions sometimes deliberately avoid testing their AI systems for accuracy, because identifying a flaw creates a documented duty to fix it. Regulators are beginning to treat willful ignorance of AI failure modes as a compliance violation in its own right. This mirrors the SEC's evolving stance on technology-related due diligence obligations.

The Regulatory Patchwork: A Map With No Legend

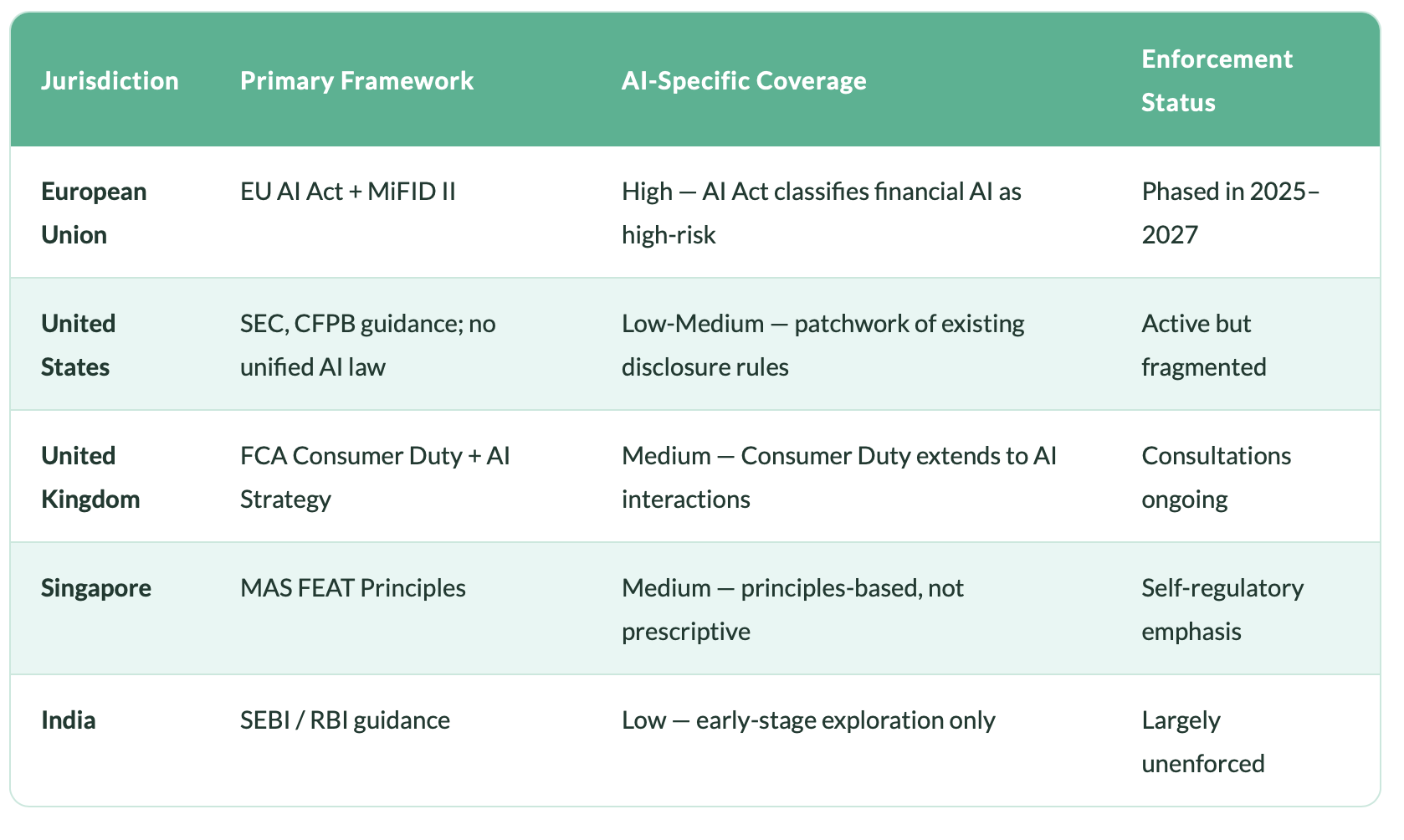

Current AI liability regulation in financial services is fragmented across jurisdictions: the EU AI Act classifies certain financial AI systems, such as credit scoring, as high-risk and requires conformity assessments and human oversight; the FCA applies Consumer Duty principles; and the SEC continues to evolve its technology due diligence guidance. No existing framework definitively answers who pays when an AI agent causes financial harm to a retail client, which leaves significant compliance uncertainty.

If you're a compliance officer trying to navigate AI liability in financial services today, you're working with a patchwork of frameworks built for different problems, in different eras, by regulators who could not have anticipated a GPT-class language model delivering financial guidance at scale.

The EU AI Act represents the world's most comprehensive attempt to regulate AI, and it reaches directly into financial services. It places AI systems used to assess the creditworthiness of individuals, and to assess risk and set prices in life and health insurance, in the "high-risk" category, which triggers conformity assessments, human oversight mechanisms, and extensive documentation. Yet even the EU framework does not definitively answer who pays when an AI agent causes financial harm to a retail client.

Understanding how these regulatory frameworks are developing globally is essential for any institution deploying AI in client-facing roles. As the Financial Stability Board has outlined in its analysis of AI in financial markets, the systemic risks of AI misrepresentation extend well beyond individual consumer harm: they carry the potential to destabilize market confidence at scale.

How AI Errors Propagate

AI financial misrepresentation is uniquely dangerous because a single flawed output can reach thousands of users before anyone detects it, whereas a human advisor's mistake happens one conversation at a time. Because the same output is delivered identically across an entire customer base, financial harm compounds at scale, creating systemic risk that extends beyond individual consumer losses to a potential erosion of market confidence.

One of the features that makes AI financial misrepresentation particularly dangerous is how quietly and how widely harm can propagate before anyone detects it. Unlike a human advisor who misstates a figure in one conversation, an AI agent can deliver the same error to tens of thousands of users simultaneously.
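The contrast with a human advisor comes down to simple arithmetic: exposure scales with conversation volume multiplied by the time it takes to notice the error. Below is a back-of-the-envelope model under two stated assumptions, constant traffic and every in-scope conversation receiving the identical flawed answer. The function name `estimated_exposure` and the sample figures are hypothetical and are not drawn from any real deployment.

```python
def estimated_exposure(conversations_per_hour: float,
                       share_in_scope: float,
                       hours_to_detection: float) -> float:
    """Rough number of conversations that receive the same flawed answer before anyone notices.

    Assumes a constant conversation rate and that every in-scope conversation
    (for example, every question about the affected product) gets the identical error.
    """
    if conversations_per_hour < 0 or hours_to_detection < 0:
        raise ValueError("rates and durations must be non-negative")
    if not 0.0 <= share_in_scope <= 1.0:
        raise ValueError("share_in_scope must be between 0 and 1")
    return conversations_per_hour * share_in_scope * hours_to_detection


if __name__ == "__main__":
    # Hypothetical inputs: 2,000 conversations per hour, 5% about the affected
    # product, and three days (72 hours) before the error is caught.
    print(estimated_exposure(2_000, 0.05, 72))  # 7200.0
```

A human advisor who misstates a figure affects roughly one conversation. In this model, detection lag is a direct multiplier: halving the time to detection halves the exposure, which is why continuous output monitoring matters alongside pre-deployment testing.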

What Does Responsible AI Deployment Actually Look Like?

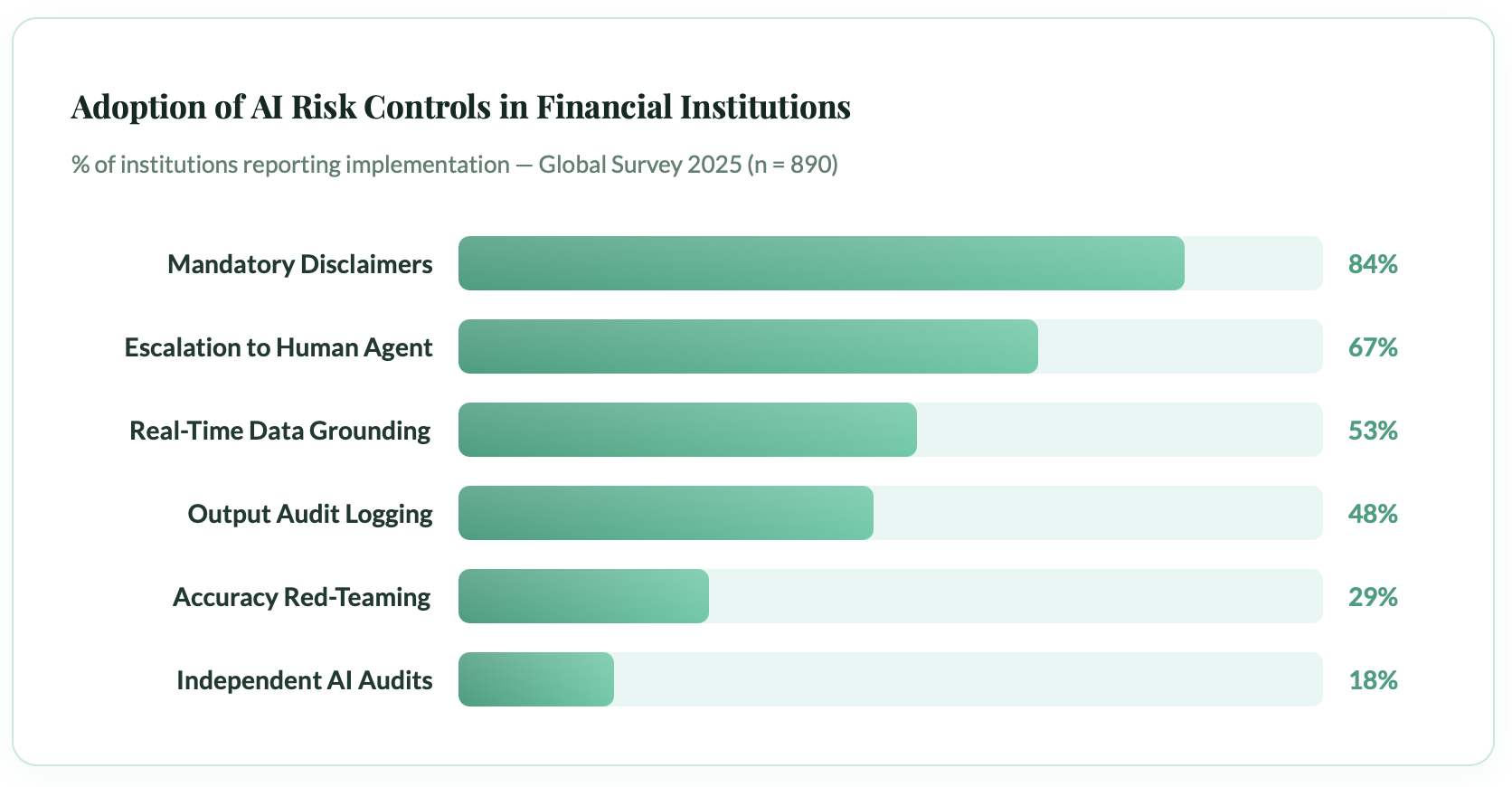

Responsible AI deployment in financial services requires accountability infrastructure to match: accuracy monitoring systems, red teaming programs, independent audits, clear escalation protocols, and human oversight mechanisms. The institutions most exposed to liability are those that deployed AI without these structures. Fewer than one in five financial institutions currently conduct red teaming or independent audits of their AI outputs.

The good news, if there is any, is that the liability problem is, in large part, a design and governance problem. The institutions most exposed to AI misrepresentation liability are those that have deployed AI systems without corresponding accountability infrastructure. Those that have built thoughtfully are, at minimum, better positioned to defend themselves.

The low adoption rates for red teaming and independent audits are particularly striking. These are the mechanisms most likely to catch systemic accuracy failures before they reach consumers, and yet fewer than one in five institutions have implemented them.

The Governance Framework That Should Exist

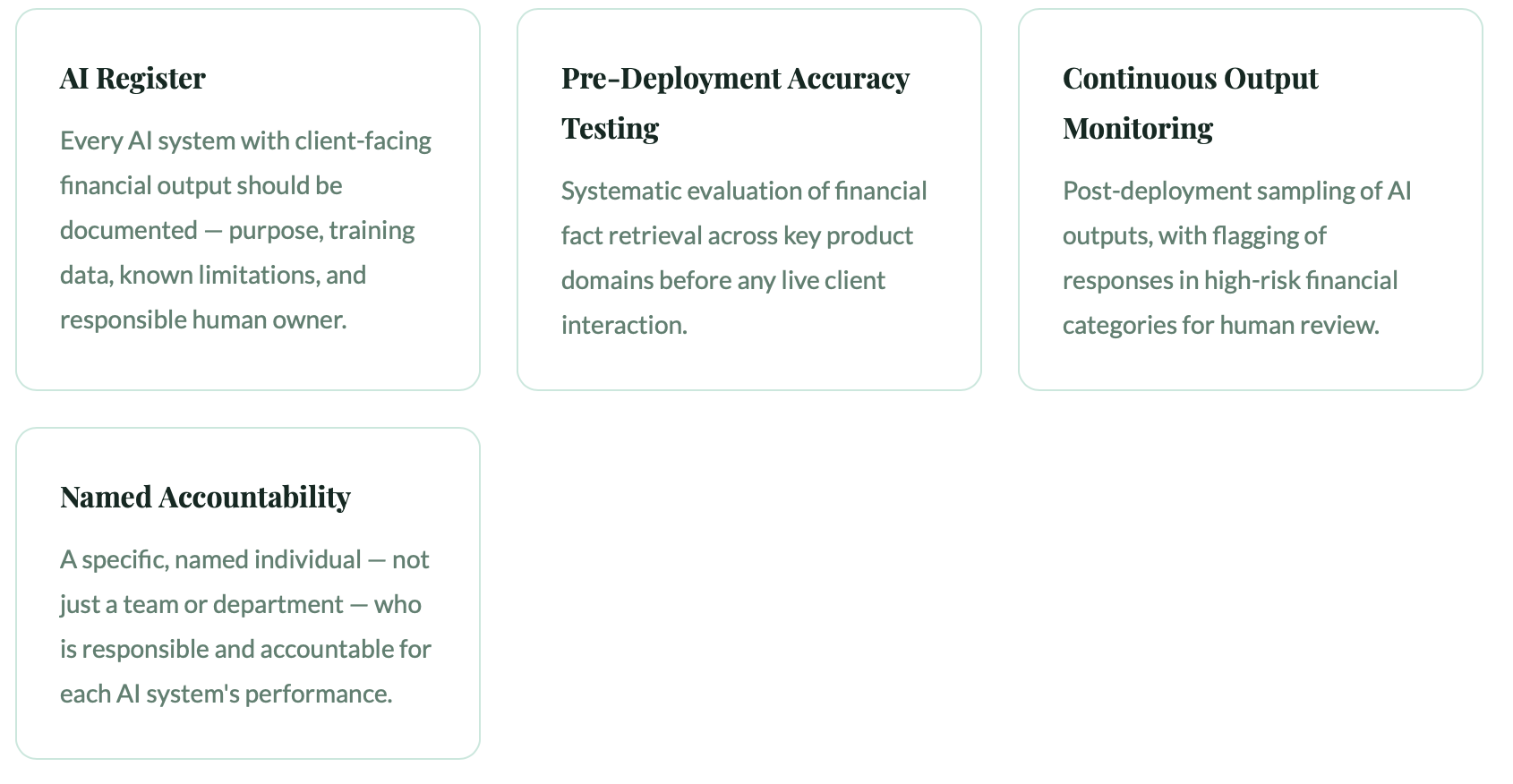

A minimum viable governance framework for AI in financial services should include pre-deployment accuracy testing, continuous output monitoring, clear human escalation pathways, transparent documentation of AI limitations, and incident response protocols. Notably, courts have been skeptical of "this is not financial advice" disclaimers when the interface is designed to deliver what functions as authoritative financial guidance.

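Regulatory frameworks are converging on a set of principles for responsible AI deployment in financial services. Drawing on the EU AI Act, FCA Consumer Duty, and the SEC's evolving guidance, a minimum viable governance structure should cover five areas: accuracy testing before deployment, continuous monitoring of outputs in production, clear pathways for escalating to a human, transparent documentation of what the AI cannot do, and a protocol for responding to incidents.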

The Disclaimer Trap

Many institutions believe that adding "This is not financial advice" to AI outputs provides legal protection. Courts have been skeptical. If an interface is designed to look and feel like authoritative financial guidance, a small-print disclaimer may not override the reasonable impression created in a consumer's mind. The CFPB's spotlight report on AI chatbots in banking explicitly raised concerns about this practice, noting that functional design can override technical disclaimers in consumer understanding.

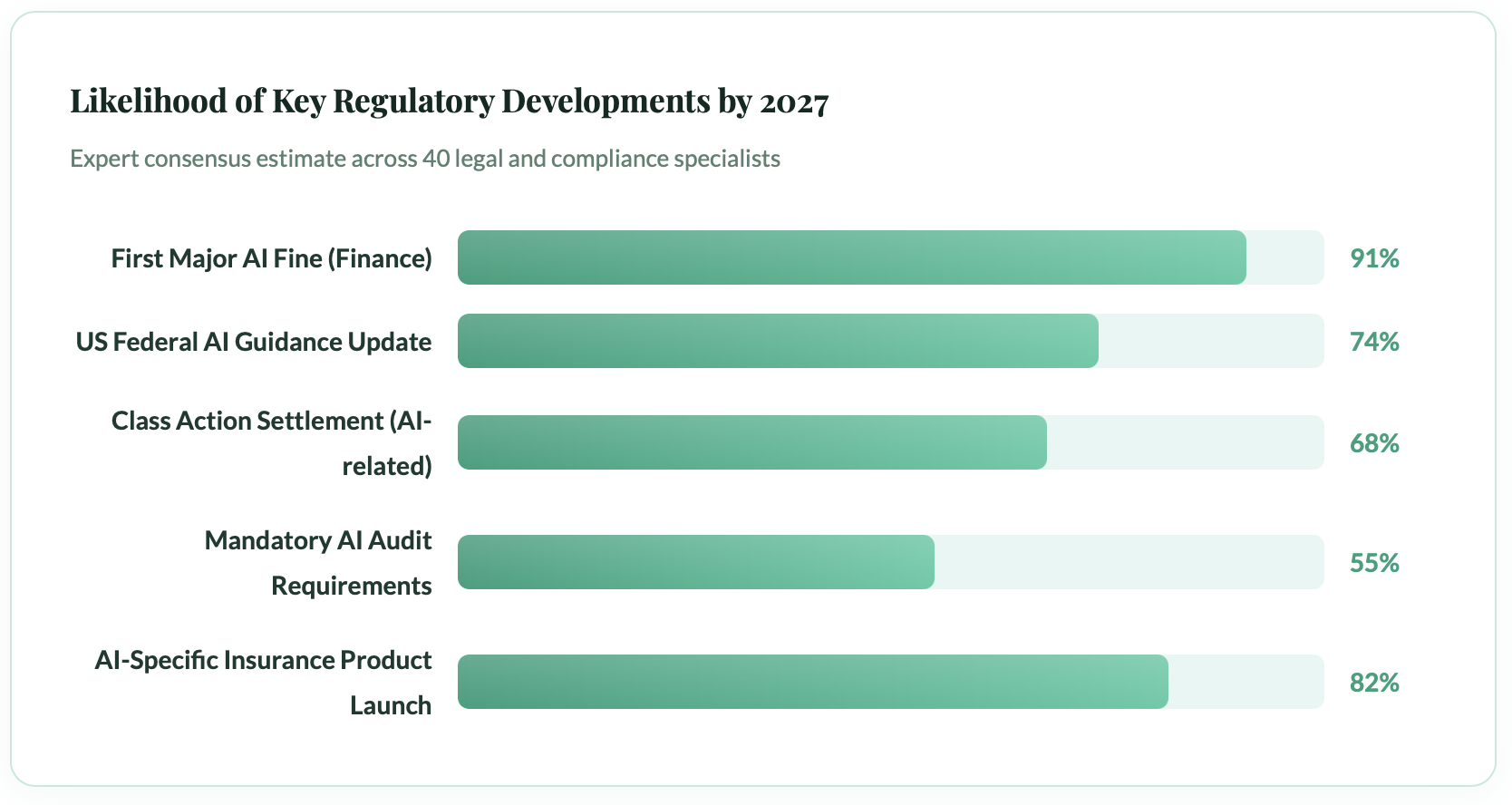

Where Is This Heading? The Legal Horizon

Within the next 24 to 36 months, the financial industry can expect a major regulatory enforcement action against an institution for AI misrepresentation, at least one significant class action settlement, the emergence of AI-specific liability insurance products, and evolving case law that clarifies institutional duty of care for AI-generated financial communications. The market has already concluded this risk is real and quantifiable.

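Legal doctrine moves slowly. AI moves fast. The gap between them is where the most significant near-term risks live. Over the next 24 to 36 months, we can reasonably expect a major regulatory enforcement action over AI misrepresentation, at least one significant class action settlement, the emergence of AI-specific liability insurance, and case law that begins to clarify an institution's duty of care for AI-generated financial communications.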

The near-certainty of a major enforcement action and the high likelihood of a class action settlement should serve as a forcing function for institutions that have been slow to act. The question is no longer whether this will happen, but who will be first, and whether your organisation has done enough to demonstrate good faith.

Perhaps most significant is the development of AI-specific insurance products. Just as cyber insurance emerged to cover data breach liabilities, a new class of AI liability insurance is taking shape, suggesting that the market, at least, has concluded that this risk is real, material, and quantifiable.

The "Digital Employee" Needs a Digital Employment Contract

Human financial advisors operate within established accountability structures including licensing, contracts, regulatory oversight, insurance, and clear chains of responsibility. AI agents performing equivalent functions currently lack these safeguards. Regulation, litigation, and market forces are converging toward explicit accountability frameworks for AI agents in financial services, and institutions that build these structures voluntarily will be better positioned than those compelled by enforcement.

The metaphor of the "digital employee" is useful precisely because it exposes the absurdity of the current situation. When you hire a financial advisor, there is a contract, a license, a regulatory framework, an insurance policy, and a clear chain of accountability. When you deploy an AI agent to do the same job, none of those structures currently exist in their full form.

That gap is not permanent. Regulation, litigation, and market forces are all pushing toward a world where AI agents in financial services carry explicit accountability frameworks. The institutions that build those frameworks now, voluntarily and thoughtfully, will be significantly better positioned than those that wait to be compelled.

The AI did not misrepresent the fact in a vacuum. Someone deployed it. Someone designed the interface. Someone chose not to build the oversight mechanism. That someone has a name, a company, and a regulator.