Most conversations about AI in financial analysis follow the same pattern. A vendor makes a capability claim. A CFO team asks for proof. The proof offered is a case study, a percentage reduction in time, or a reference to a large language model's benchmark score.

None of that is evidence in the sense that matters for a public company making a disclosure decision. Here, evidence means peer-reviewed research, regulator-published findings, and audit-grade data that your external auditors would accept as support for a conclusion.

This post applies that standard to AI in financial analysis and reporting. Every claim is mapped to a specific source. Where the evidence supports a capability, we say so. Where it does not, we say that too. By the end, you will have a research-grounded view of where AI in financial analysis delivers measurable value, where it introduces risk that existing tools do not, and which architectural difference separates AI tools that are appropriate for SEC disclosure work from those that are not.

What the Evidence Actually Shows About AI Adoption in Finance

Before examining what works and what does not, the scale of adoption needs to be established with precision. The numbers are meaningful, and so is what they do not tell you.

According to KPMG's December 2024 global survey of 2,900 finance executives across 23 countries, 62% of US companies are using AI to a moderate or large degree, 58% are piloting or deploying generative AI, and 52% are using AI specifically in financial reporting. In the same survey, 92% of companies report their finance function's AI initiatives are meeting or exceeding ROI expectations.

Those are headline numbers. The more instructive finding is the composition of that ROI. KPMG's research identifies that adoption is most advanced and ROI most consistent in specific, bounded use cases: document processing, data extraction from structured sources, and workflow automation. The ROI is weakest and most uncertain in use cases that require the AI to generate novel analytical conclusions from unstructured data without grounded source verification.

The same research identifies the barriers most commonly cited by finance teams that are not achieving expected ROI. Data security and privacy concerns were identified by 56% of respondents as the biggest barrier to AI adoption, followed by limited skills and talent at 46%, difficulty gathering relevant and consistent data at 44%, and inadequate funding and investment at 43%. The data quality issue is structurally important: AI tools produce outputs only as reliable as the data they process, and financial reporting data is complex, period-specific, and highly sensitive to labelling and classification choices.

According to the KPMG US AI in Finance report, December 2024, 100% of US finance leaders report they expect to be either piloting or using AI in financial reporting within three years. The direction of travel is not in question. What remains contested is which applications of AI in financial analysis are reliable enough to support audit-grade outputs.

What Works: The Use Cases With Consistent Evidence Behind Them

The following use cases have consistent, research-supported evidence of reliable AI performance in financial analysis and reporting contexts. For each, the specific evidence base is identified.

Structured Document Processing and Data Extraction

The most consistently evidenced AI capability in financial analysis is the extraction of structured data from financial documents. When AI is applied to a clearly defined extraction task (pulling revenue figures from a specific line in a 10-K, identifying dates from filing headers, or extracting XBRL-tagged values from structured financial statements), accuracy rates are high and error patterns are predictable.

Academic research published in 2025 and 2026 confirms that Retrieval-Augmented Generation (RAG) architectures, which ground AI outputs in specific retrieved source documents rather than generating from training data, substantially outperform ungrounded generation on financial document tasks. According to research from AAAI 2026, financial documents contain complex numerical relationships, temporal dependencies, and regulatory constraints that demand not only accurate retrieval but also mathematically precise reasoning and compliance validation. RAG systems designed for this environment achieve meaningfully higher accuracy than general-purpose language models on structured extraction tasks.

The practical implication for CFO teams: AI tools that retrieve and extract from identified source documents, with citations that allow the extracted output to be verified against the source, are appropriate for structured financial data work. AI tools that generate financial data from training knowledge, without grounding in specific retrieved documents, are not.

Peer Disclosure Research and Benchmarking

EDGAR-based peer benchmarking is a well-evidenced AI use case because the task has clear correctness criteria. Either the peer company's 10-K says X about revenue recognition or it does not. Either the peer's filing covers cybersecurity governance in three paragraphs or in one. These are retrievable, verifiable facts, not generated outputs.

AI tools that query the EDGAR database, retrieve the specific filing sections, and present the verbatim text with source attribution are performing a task with a clear factual ground truth. The output can be verified by the user against the EDGAR source in seconds. This is structurally different from an AI tool that summarises what peer companies generally say about a topic based on training data.
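As a minimal sketch of what a traceable EDGAR lookup can start from, the Python below builds the address of a registrant's filing index on the SEC's public submissions endpoint and prepares, but does not send, a request. The CIK value, the contact string, and the function names are illustrative assumptions and do not describe any particular tool; the SEC asks automated clients to identify themselves in the User-Agent header.

```python
from urllib.request import Request

# Public EDGAR submissions endpoint; the CIK is zero-padded to ten digits.
SUBMISSIONS_URL = "https://data.sec.gov/submissions/CIK{cik:010d}.json"


def submissions_url(cik: int) -> str:
    """Build the filing-index URL for one registrant."""
    if cik <= 0:
        raise ValueError("CIK must be a positive integer")
    return SUBMISSIONS_URL.format(cik=cik)


def build_request(cik: int, contact: str) -> Request:
    """Prepare a request that declares who is calling; nothing is sent here."""
    return Request(submissions_url(cik), headers={"User-Agent": contact})


# Placeholder CIK and contact details, for illustration only.
request = build_request(1234567, "Example Corp reporting-team@example.com")
print(request.full_url)
```

Because the URL is derived from the registrant's identifier rather than from model output, anyone reviewing the result can open the same address and confirm what was retrieved.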

The PCAOB's Technology Innovation Alliance working group's 2024 report, cited by the CAQ's September 2025 Audit Insider, notes that technology tools used in financial reporting must produce outputs that auditors can evaluate for accuracy, completeness, and source reliability. EDGAR-sourced peer benchmarking meets this standard because each output has a traceable source.

Roll-Forward and Cross-Period Consistency Checking

AI applied to roll-forward tasks (updating a prior period filing for a new period, identifying what language has changed and what has not, and flagging unexplained divergences) performs a task with clear mechanical correctness criteria. The prior period document exists. The current period document exists. Whether the language changed can be determined with high precision.

Cross-period consistency checking, which identifies where the current draft describes a metric or business condition differently from the prior period without an explanatory narrative, is similarly well-defined. The task does not require the AI to form novel analytical conclusions. It requires comparison against an existing document, which is a high-reliability AI application.
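The mechanical core of a roll-forward comparison can be illustrated without any AI at all. The sketch below uses Python's standard `difflib` module to flag sentence-level differences between two versions of a disclosure; the sample sentences are invented, and the sentence splitter is deliberately naive, so treat it as an illustration of the comparison step and not as a production implementation.

```python
import difflib
import re


def split_sentences(text: str) -> list[str]:
    """Naive splitter: breaks after '.', '?' or '!' when followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.?!])\s+", text.strip()) if s.strip()]


def flag_changes(prior: str, current: str) -> list[dict]:
    """Return every sentence-level difference between two versions of a text."""
    prior_s, current_s = split_sentences(prior), split_sentences(current)
    matcher = difflib.SequenceMatcher(a=prior_s, b=current_s, autojunk=False)
    changes = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            continue  # unchanged language needs no review
        changes.append(
            {"change": tag, "prior": prior_s[i1:i2], "current": current_s[j1:j2]}
        )
    return changes


# Invented sample text for illustration.
prior_text = (
    "Revenue is recognised when control transfers to the customer. "
    "We had one reportable segment."
)
current_text = (
    "Revenue is recognised when control transfers to the customer. "
    "We had two reportable segments."
)

changes = flag_changes(prior_text, current_text)
for c in changes:
    print(c["change"], "|", c["prior"], "->", c["current"])
```

Each flagged item points at both the prior and current wording, so a reviewer can decide whether the change is explained elsewhere in the draft.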

Comment Letter Pattern Recognition

The SEC's comment letter archive contains structured correspondence with identifiable patterns. A comment about MD&A analysis adequacy has a recognisable linguistic structure. A comment about non-GAAP reconciliation completeness follows a predictable form. AI tools trained or prompted to recognise these patterns in the EDGAR correspondence archive and surface relevant examples for a user's current disclosure question are performing a retrieval and classification task with verifiable outputs.

Research examining Form S-4 comment letter patterns across large transaction samples confirms that comment letter activity clusters around predictable disclosure areas with consistent linguistic markers. AI tools that surface these patterns from the actual EDGAR correspondence archive, rather than generating summaries of what the SEC generally cares about, provide a reliable, citable research output.
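To make the idea of "consistent linguistic markers" concrete, here is a small, hedged sketch of rule-based topic tagging for comment letter text. The topic names, the regular expressions, and the sample comments are all hypothetical and were written for this illustration; a real classifier would be built and validated against the actual correspondence archive.

```python
import re

# Hypothetical marker patterns, chosen for illustration only.
TOPIC_PATTERNS = {
    "non_gaap": re.compile(r"\bnon-gaap\b", re.IGNORECASE),
    "mdna": re.compile(r"\bmd&a\b|management's discussion", re.IGNORECASE),
    "segments": re.compile(r"\breportable segments?\b", re.IGNORECASE),
}


def tag_topics(comment: str) -> list[str]:
    """Return the sorted list of topics whose marker pattern appears in the comment."""
    return sorted(topic for topic, pattern in TOPIC_PATTERNS.items() if pattern.search(comment))


# Invented sample comments, not quotations from real SEC correspondence.
sample_comments = [
    "Please reconcile your non-GAAP measure to the most directly comparable GAAP measure.",
    "Please expand your MD&A to discuss the drivers of the change in gross margin.",
    "Please tell us how you identified your reportable segments.",
]

tagged = [(tag_topics(c), c) for c in sample_comments]
for topics, comment in tagged:
    print(topics, "|", comment)
```

The output of a tagger like this is only a pointer: the value for a disclosure team comes from reading the underlying letters that the tags surface.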

What Does Not Work: Where the Evidence Shows Consistent Risk

The following use cases have consistent evidence of AI unreliability in financial analysis and reporting contexts. The risk patterns are not hypothetical. They are documented in peer-reviewed research and regulatory publications.

Ungrounded Generation of Financial Conclusions

The most significant documented risk of AI in financial analysis is hallucination: the generation of outputs that are factually incorrect, plausible-sounding, and not grounded in any retrieved source document. In the financial reporting context, this risk is not a minor quality issue. It is a material control failure.

Research evaluating general-purpose chatbots on legal and financial questions found hallucination rates that vary widely with the tool and the specificity of the question. Stanford researchers reported in 2024 that general-purpose chatbots showed hallucination rates of 58% to 88% on legal questions. Financial analysis questions differ from legal questions, but both domains require precise, sourced conclusions about technical regulatory requirements, which makes the legal research findings highly relevant.

The specific pattern of financial hallucination is described in 2025 and 2026 academic research as distinct from the more commonly discussed forms. Research published in 2025 identifies what it calls "ecological errors" as the primary failure mode in financial AI: not the invention of fictitious facts, but precise mechanical failures such as selecting an adjacent temporal column in a financial table, or applying correct logic to incorrectly extracted variables. Financial hallucinations often follow specific patterns, including numerical miscalculations and temporal shifts, which generic benchmarks do not capture. A 0.5% error in a financial figure can represent millions of dollars in a material disclosure.
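A short worked example shows why these mechanical errors matter. The sketch below uses an invented income-statement excerpt to contrast selecting a cell by its explicit period header with the adjacent-column mistake, and to put a 0.5% error in dollar terms. All figures, labels, and function names are hypothetical.

```python
from decimal import Decimal

# Hypothetical income-statement excerpt, in USD millions, keyed by fiscal year.
TABLE = {
    "Revenue": {"FY2024": Decimal("2000"), "FY2023": Decimal("1850")},
    "Operating income": {"FY2024": Decimal("310"), "FY2023": Decimal("275")},
}


def extract_value(table, label, period):
    """Select a cell by row label and period header, never by column position."""
    row = table.get(label)
    if row is None:
        raise KeyError(f"row not found: {label}")
    if period not in row:
        raise KeyError(f"period not found for {label}: {period}")
    return {"label": label, "period": period, "value": row[period]}


def relative_error(reported, correct):
    """Absolute difference as a fraction of the correct value."""
    return abs(reported - correct) / correct


correct = extract_value(TABLE, "Revenue", "FY2024")["value"]
shifted = extract_value(TABLE, "Revenue", "FY2023")["value"]  # the adjacent-column mistake

shift_error = relative_error(shifted, correct)   # 150 / 2000
half_percent = correct * Decimal("0.005")        # 0.5% of USD 2,000 million

print(f"column shift error: {shift_error:.1%}")
print(f"0.5% of revenue: USD {half_percent:.0f} million")
```

In this invented case the column shift produces a 7.5% error, and even a 0.5% error equals USD 10 million, while both numbers still look entirely plausible on the page. Returning the label and period alongside the value is what lets a reviewer catch the shift.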

The implication for CFO teams: any AI tool used in financial reporting that generates numerical conclusions without retrieving and citing the specific source documents from which those numbers are drawn is introducing hallucination risk into the disclosure process. The standard for financial reporting outputs is not "probably correct." It is "verifiably correct against a cited source."

Autonomous Generation of Accounting Conclusions

AI tools that generate accounting conclusions (the appropriate ASC standard to apply, the correct classification of a transaction, the threshold for materiality in a specific context) without grounding those conclusions in retrieved, cited ASC guidance are inappropriate for use in financial reporting.

The PCAOB addressed this directly in its 2025 publication on AI in auditing. According to the PCAOB's 2025 statement on audit regulations, the lack of clear standards on what constitutes acceptable AI-based audit evidence creates a risk that AI-generated conclusions cannot be adequately evaluated by auditors. The PCAOB's concern is not that AI generates wrong answers in an obvious way. It is that AI-generated conclusions may not be traceable to the evidence that would allow an auditor to assess their reliability.

The FASB Accounting Standards Codification is the authoritative source for all US GAAP accounting guidance. An AI tool that tells a reporting team how to account for a lease modification under ASC 842 without citing the specific paragraph of the codification that supports that conclusion is providing an unverifiable output. An AI tool that retrieves the relevant ASC 842 paragraph, presents it verbatim, and maps it to the specific transaction facts provides an output that a technical accountant and an auditor can evaluate.

General-Purpose AI in High-Stakes Disclosure Drafting

Using general-purpose AI tools (those not specifically designed for SEC reporting with EDGAR and ASC grounding) to draft or substantially revise financial disclosures introduces two compounding risks: hallucination of regulatory requirements, and generation of disclosure language that is not benchmarked against what comparable registrants are actually filing.

At the December 2025 AICPA and CIMA Conference on Current SEC and PCAOB Developments, SEC Chairman Atkins and the Office of the Chief Accountant stressed their focus on understanding the use of AI in financial reporting, highlighted the importance of AI governance, and discussed new risks associated with the use of AI, including the explainability of AI models and emerging fraud risks. The SEC's concern about explainability is directly relevant to disclosure drafting: a disclosure prepared with AI assistance must be defensible to the SEC, which means every material conclusion in the disclosure must be traceable to its source.

The Harvard Law School Forum on Corporate Governance's January 2026 analysis of the 2026 reporting season specifically identifies AI governance and the use of AI in financial reporting as areas of increasing SEC and PCAOB focus. Companies using AI in their disclosure process without documented governance frameworks, human oversight protocols, and source verification procedures are taking on regulatory risk that may not be visible until a comment letter or audit deficiency finding surfaces it.

The Architectural Difference That Determines Reliability

The evidence points to a single architectural distinction that separates AI tools appropriate for financial analysis from those that are not. The distinction is whether the tool grounds every output in retrieved, cited source documents or generates outputs from training knowledge.

This is the difference between a retrieval-augmented system and a generative system. In a retrieval-augmented system, the AI retrieves specific documents (the relevant EDGAR filing section, the applicable ASC codification paragraph, the SEC comment letter exchange) and grounds its output in those retrieved documents. Every conclusion is linked to a specific source that the user can verify. The AI's role is retrieval, organisation, and synthesis of verifiable material.

In a generative system, the AI generates outputs based on patterns learned during training. Those outputs may be accurate, and often are, but they are not traceable to a specific source. The user cannot verify them against a document. The auditor cannot evaluate their evidence basis. The disclosure committee cannot cite them in their pre-filing review documentation.
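The distinction can be sketched in a few lines of Python. The toy example below is not how any production RAG system works (real systems typically use embedding-based search and a language model for synthesis); it uses simple keyword overlap, with invented placeholder passages and source identifiers, purely to show the control property that matters: the system returns only retrieved text with its source, and declines when nothing relevant is retrieved.

```python
from dataclasses import dataclass

PUNCT = ".,;:()?!"


@dataclass(frozen=True)
class Passage:
    source_id: str  # hypothetical locator for a filing section or guidance paragraph
    text: str


def tokenize(text: str) -> set[str]:
    """Lower-case words longer than three characters, with punctuation stripped."""
    words = (w.strip(PUNCT).lower() for w in text.split())
    return {w for w in words if len(w) > 3}


def retrieve(question: str, corpus: list[Passage], min_overlap: int = 2) -> list[Passage]:
    """Rank passages by terms shared with the question and drop weak matches."""
    q = tokenize(question)
    scored = [(len(q & tokenize(p.text)), p) for p in corpus]
    hits = [(score, p) for score, p in scored if score >= min_overlap]
    hits.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in hits]


def grounded_answer(question: str, corpus: list[Passage]) -> dict:
    """Return retrieved text with its source, or decline when nothing is retrieved."""
    hits = retrieve(question, corpus)
    if not hits:
        return {"status": "no_source_found", "citations": []}
    return {
        "status": "grounded",
        "citations": [{"source_id": p.source_id, "quote": p.text} for p in hits],
    }


# Placeholder corpus: invented text, not actual codification or filing language.
CORPUS = [
    Passage("DOC-A", "Placeholder passage about lease modification accounting by a lessee."),
    Passage("DOC-B", "Placeholder passage about segment reporting disclosures."),
]

answer = grounded_answer("How should a lessee account for a lease modification?", CORPUS)
declined = grounded_answer("What is the weather forecast today?", CORPUS)
print(answer["status"], [c["source_id"] for c in answer["citations"]])
print(declined["status"])
```

The design choice worth noting is the explicit `no_source_found` path: a grounded system has a defined way to say that it has no evidence, whereas an ungrounded generator will usually produce an answer anyway.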

For SEC disclosure work, this distinction is not a product preference. It is a control requirement. The Section 302 certification that the CFO and CEO sign at the end of every 10-K and 10-Q period certifies that the disclosure controls and procedures are designed and operating effectively to ensure material information is recorded, processed, summarised, and reported accurately. AI-generated outputs that cannot be traced to their source evidence are not consistent with effective disclosure controls.

What This Means for How You Evaluate AI Tools

The evidence establishes a practical evaluation framework for CFO teams assessing AI tools for financial analysis and reporting. Four questions determine whether an AI tool meets the standard.

Does every output cite a specific source? The output should identify the specific EDGAR filing, ASC codification paragraph, or SEC correspondence that supports each conclusion. If the tool generates a conclusion without citing a source, that conclusion cannot be used in a disclosure workflow without independent verification.

Can the cited source be retrieved and verified by the user? The citation is only meaningful if the user can access the source document and confirm that the cited text says what the AI output claims it says. Tools that cite sources without providing access to those sources, or that generate synthetic citations, fail this test.

Is the tool's training and grounding scope limited to the relevant data universe? A tool grounded specifically in EDGAR filings, ASC guidance, and SEC correspondence produces outputs in a bounded, verifiable domain. A general-purpose tool trained on internet data produces outputs in an unbounded domain where financial accuracy cannot be systematically assured.

Does the tool's governance model support human oversight and audit trail documentation? The PCAOB and SEC have both signalled that AI governance, explainability, and human oversight are evaluation criteria for AI use in financial reporting. A tool that generates outputs without creating an audit trail of what sources were retrieved, what the AI produced, and what a human reviewer verified does not support the governance documentation that regulators expect.
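Two of these questions, whether a citation can be verified and whether an audit trail exists, lend themselves to a brief illustration. The sketch below checks that a quoted passage actually appears in the retrieved source and then writes a log record containing a fingerprint of that source. The field names, the source identifier, and the sample text are assumptions made for this example and do not describe any specific product's schema.

```python
import hashlib
import json
import re
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


def normalise(text: str) -> str:
    """Collapse whitespace and case so line wraps do not cause false mismatches."""
    return re.sub(r"\s+", " ", text).strip().lower()


def quote_in_source(quote: str, source_text: str) -> bool:
    """True only if the quoted text appears in the source after normalisation."""
    q = normalise(quote)
    return bool(q) and q in normalise(source_text)


@dataclass
class AuditRecord:
    question: str
    source_id: str        # hypothetical locator for the retrieved document
    source_sha256: str    # fingerprint of the exact text that was retrieved
    quote_verified: bool  # did the cited quote appear in that text?
    ai_output: str
    reviewer: str         # the human who reviewed the output
    recorded_at: str      # UTC timestamp in ISO 8601 format


def make_record(question, source_id, source_text, quote, ai_output, reviewer):
    return AuditRecord(
        question=question,
        source_id=source_id,
        source_sha256=hashlib.sha256(source_text.encode("utf-8")).hexdigest(),
        quote_verified=quote_in_source(quote, source_text),
        ai_output=ai_output,
        reviewer=reviewer,
        recorded_at=datetime.now(timezone.utc).isoformat(),
    )


# Placeholder source text and quote, invented for illustration.
source = "The company leases office space\nunder operating leases expiring through 2031."
record = make_record(
    question="When do the office leases expire?",
    source_id="EXAMPLE-FILING/note-7",
    source_text=source,
    quote="office space under operating leases",
    ai_output="The office leases expire through 2031.",
    reviewer="j.doe",
)
log_line = json.dumps(asdict(record), sort_keys=True)
print(log_line)
```

A synthetic citation fails the `quote_in_source` check because the quoted words are not in the retrieved text, and the stored hash lets a later reviewer confirm that the source has not changed since the record was written.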

How Ask Fina Applies This Evidence Framework

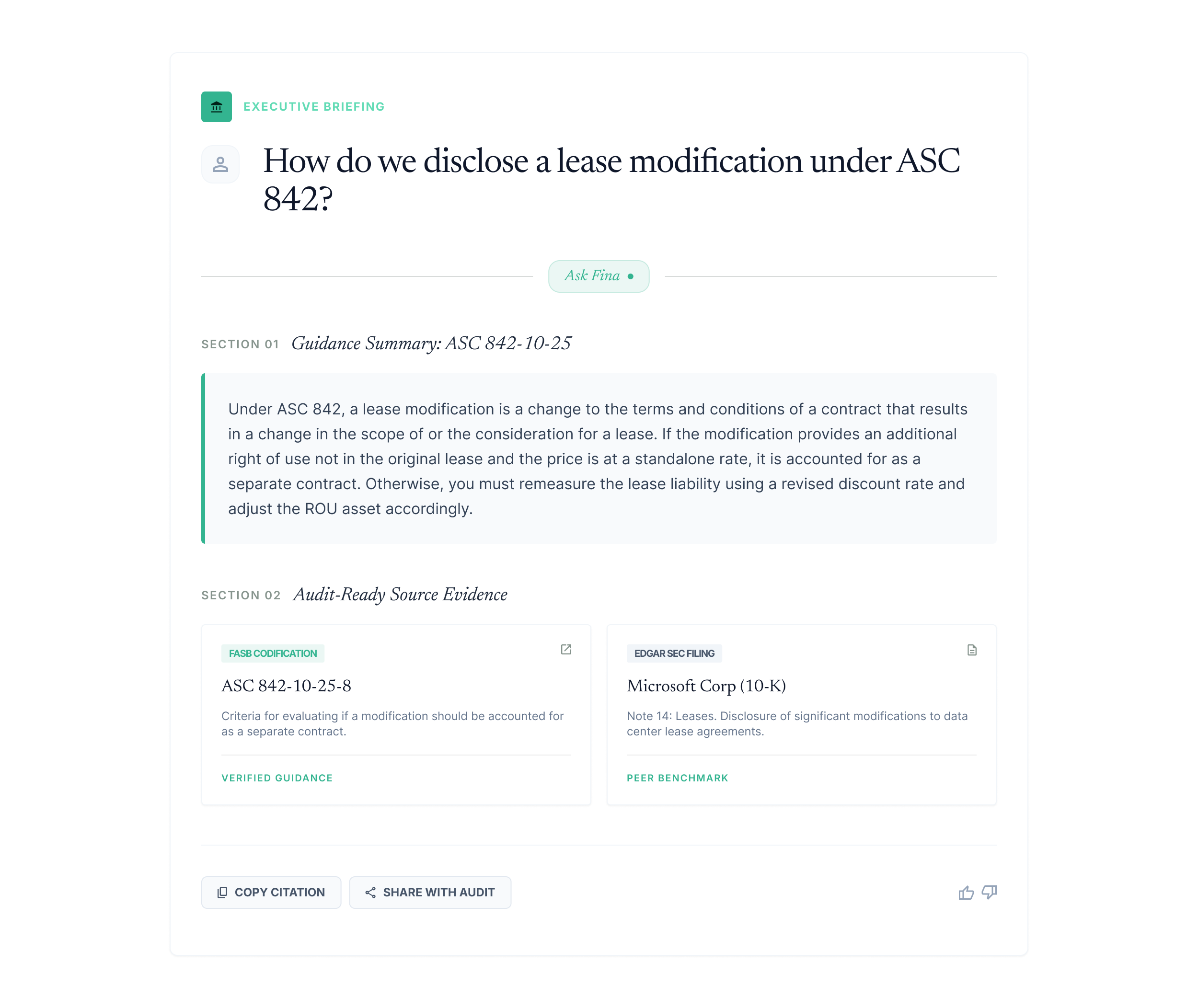

Finrep's Ask Fina is the AI assistant built specifically to meet the evidence standard described above. Every answer Ask Fina provides is grounded in retrieved, cited sources from the relevant financial reporting data universe: EDGAR filings, ASC guidance from the FASB codification, SEC comment letter correspondence, and SEC regulatory publications.

When a Controller asks Ask Fina how to disclose a lease modification under ASC 842, the response retrieves the applicable ASC 842 paragraph, presents the relevant guidance verbatim with codification attribution, and surfaces how comparable registrants have disclosed similar lease modifications in their EDGAR filings. The user sees the source. The auditor can evaluate the source. The disclosure committee can document in their pre-filing review that the conclusion was grounded in cited, verifiable guidance.

When an SEC Reporting Manager asks Ask Fina about the SEC's current comment focus on cybersecurity governance disclosures, the response retrieves recent comment letter exchanges from the EDGAR correspondence archive, organised by industry and recency, with the SEC's exact question and the company's accepted response. This is evidence that a human reviewer can assess and a disclosure team can act on.

This is what Finrep's evidence-first philosophy means in practice. Not a claim that AI produces accurate outputs. A structural commitment to grounding every AI output in retrieved, cited, auditor-traceable source material that meets the evidence standard that public company disclosure work requires.

The practical output: Ask Fina answers take minutes rather than the hours a manual EDGAR or codification search requires, and every answer arrives with the source citations that allow your team, your auditors, and your disclosure committee to verify and document the basis for each conclusion.

The Regulatory Trajectory: Where the SEC and PCAOB Are Heading on AI

Understanding where regulation is going on AI in financial reporting matters for teams making multi-year technology commitments. The direction of both the SEC and the PCAOB is toward more governance, more explainability, and more human oversight of AI-generated outputs, not less.

The PCAOB's Technology Innovation Alliance working group 2024 recommendations specifically addressed the need for standards on what constitutes acceptable AI-based audit evidence. The absence of those standards is currently creating uncertainty for audit firms about how to evaluate AI-assisted financial reporting. The standards, when they arrive, will formalise requirements for source grounding, output traceability, and human oversight that leading AI tools should already be meeting.

At the SEC level, the 2026 reporting season analysis from the Harvard Law School Forum on Corporate Governance confirms that AI governance in financial reporting is an active focus area for both the Division of Corporation Finance and the Office of the Chief Accountant. Companies that have adopted AI tools without documented governance frameworks, human oversight protocols, and source verification procedures are likely to find those gaps surfacing in comment letters or in auditor management letters as the regulatory framework matures.

The teams that are best positioned are those that adopted AI tools meeting the evidence standard described in this post (grounded in cited sources, governed by human oversight, and supported by audit trail documentation) before the formal regulatory requirements arrived. The governance framework that Finrep's platform supports is not anticipating a future requirement. It is meeting the standard that the evidence already supports as appropriate for public company disclosure work.

According to the PCAOB Auditing Standards, auditors are required to evaluate the reliability of evidence used to support financial reporting conclusions. AI-generated outputs that cannot be traced to specific, verifiable sources do not meet the evidence reliability standard that auditing standards require. This is not a future risk. It is a current audit quality issue that is receiving increasing attention in PCAOB inspection programmes.

Frequently Asked Questions

What does the evidence show about AI accuracy in financial analysis?

The evidence shows a significant performance gap between AI applied to bounded, source-grounded tasks and AI applied to open-ended generation tasks. In structured document extraction, EDGAR-based peer benchmarking, and roll-forward tasks, retrieval-augmented AI systems demonstrate high accuracy because every output can be verified against the retrieved source. In ungrounded generation of financial conclusions, accounting guidance, or disclosure language, hallucination rates documented in academic research range from material to severe depending on the tool and the specificity of the question. The specific failure mode in financial AI, documented in 2025 and 2026 research, is precise mechanical errors such as temporal column shifts and incorrect variable extraction, which are harder to detect than obvious fabrications.

Why do the SEC and PCAOB care about AI governance in financial reporting?

The SEC's Division of Corporation Finance and the PCAOB have both identified AI governance as an active oversight area in 2025 and 2026. The SEC's concerns, articulated at the December 2025 AICPA and CIMA Conference, centre on explainability: AI-generated outputs used in financial disclosures must be traceable to verifiable sources in a way that allows the SEC to evaluate the basis for the conclusions reached. The PCAOB's concern is the audit evidence standard: auditors must be able to evaluate the reliability of AI-generated outputs, which requires source grounding and output traceability that many current AI tools do not provide.

What is the difference between RAG-based AI and generative AI for financial reporting?

Retrieval-Augmented Generation (RAG) grounds AI outputs in specific retrieved source documents. The AI retrieves the relevant EDGAR filing section, ASC codification paragraph, or SEC correspondence, and bases its output on that retrieved material. Every conclusion is linked to a verifiable source. Generative AI produces outputs based on patterns learned during training without retrieving or citing specific sources. For financial reporting, RAG-based systems are appropriate because their outputs are verifiable. Purely generative systems are inappropriate for disclosure work because their outputs cannot be systematically verified against source evidence.

What AI use cases in financial reporting have the strongest evidence of reliable performance?

The use cases with the strongest evidence of reliable AI performance are structured document extraction from EDGAR filings with source citations, EDGAR-based peer disclosure benchmarking with verbatim source language, roll-forward and cross-period consistency checking against prior period documents, and comment letter pattern recognition from the EDGAR correspondence archive. All of these are bounded retrieval and classification tasks with verifiable correct answers. The use cases with the weakest evidence of reliable performance are autonomous generation of accounting conclusions without ASC grounding, general-purpose disclosure drafting without EDGAR benchmarking, and open-ended financial analysis without source verification.

How does Ask Fina differ from general-purpose AI tools for financial analysis?

Ask Fina grounds every response in retrieved, cited source material from the relevant financial reporting data universe: EDGAR filings, ASC guidance from the FASB codification, and SEC comment letter correspondence. Every answer includes the specific source that supports the conclusion, which the user can retrieve and verify. General-purpose AI tools generate responses from training data without retrieving or citing specific sources, which means their financial analysis outputs cannot be systematically verified against source evidence and do not meet the audit trail standard required for public company disclosure work.

What should CFO teams look for when evaluating AI tools for financial reporting?

Four criteria determine whether an AI tool is appropriate for financial reporting work. First, every output must cite a specific retrievable source. Second, the cited source must be accessible to the user for verification. Third, the tool's grounding scope must be limited to the relevant data universe of EDGAR filings, ASC guidance, and regulatory publications. Fourth, the tool must support human oversight and create an audit trail of what sources were retrieved, what the AI produced, and what a human reviewer verified. Tools that do not meet all four criteria introduce control risk into the disclosure process that is inconsistent with the Section 302 certification standard.

Key Takeaways

- The evidence on AI in financial analysis is more nuanced than vendor claims suggest. AI performs reliably in bounded, retrieval-based tasks with verifiable source grounding. It introduces material risk in ungrounded generation of financial conclusions, accounting guidance, and disclosure language.

- KPMG's December 2024 global survey of 2,900 finance executives confirms 52% of companies are already using AI in financial reporting and 92% report ROI meeting or exceeding expectations. The ROI evidence is strongest for document processing and workflow automation, not for open-ended financial analysis generation.

- Academic research documents that AI hallucination in financial contexts takes the form of precise mechanical errors, such as temporal column shifts and incorrect variable extraction, that are harder to detect than obvious fabrications and can represent material amounts in a disclosure context.

- The SEC and PCAOB have both identified AI governance, explainability, and human oversight as active oversight priorities for 2025 and 2026. Companies using AI without documented governance frameworks and source verification procedures face regulatory exposure as formal requirements develop.

- The architectural distinction that matters is retrieval-augmented grounding versus ungrounded generation. Tools grounded in cited, verifiable source documents meet the audit evidence standard. Tools that generate outputs from training knowledge do not.

- Finrep's Ask Fina applies the evidence standard directly: every response is grounded in retrieved, cited sources from EDGAR, the ASC codification, and SEC correspondence, creating the audit trail and source traceability that public company disclosure work requires.

Finrep is an AI-powered financial disclosure intelligence platform for the Office of the CFO. 40 purpose-built AI agents grounded in EDGAR filings, ASC guidance, and SEC correspondence. SOC2 Type II and ISO 27001 certified. Zero data residency. Your data is never retained or used to train models. Backed by Accel. Trusted by CFO teams at FOX, Roku, HP, RingCentral, Wells Fargo, and Infosys.

For further reading on how evidence-first AI applies in specific financial reporting workflows, see the complete guide to SEC forms 10-K, 10-Q, and 8-K and how AI changes each workflow, and the guide on how to use SEC comment letter history to pressure-test your disclosures before filing.