Most public company teams know EDGAR as the place you submit filings. Fewer use it as the research database it actually is.

Every 10-K, 10-Q, 8-K, and proxy statement a US public company has filed electronically since EDGAR filing was phased in during the mid-1990s is stored in EDGAR and publicly accessible, along with the SEC comment letter correspondence released since 2005. That is more than 10 million documents spanning three decades of corporate disclosure. For disclosure teams who know how to search it, EDGAR is one of the most powerful competitive intelligence tools available anywhere, and it costs nothing to access.

The teams that use it well benchmark their disclosure language against peers before filing, not after receiving a comment letter. They know what market-standard disclosure looks like for any topic before they draft a word. They can pull verbatim language from 20 comparable registrants in the same SIC code in the time it used to take to find one.

By the end of this post, you will understand exactly what the EDGAR database contains, how it is structured, which search tools give you the most useful results for benchmarking work, and how Finrep's Grid Reports feature transforms raw EDGAR data into a structured peer comparison your team can act on immediately.

What Is the SEC EDGAR Database?

EDGAR, which stands for Electronic Data Gathering, Analysis, and Retrieval, is the SEC's official system for receiving, processing, and providing public access to filings submitted by public companies, mutual funds, and other entities registered with the SEC. It has been the primary submission and disclosure channel for domestic public companies since electronic filing was phased in between 1993 and 1996, and for foreign private issuers since 2002.

According to the SEC's EDGAR overview, the database currently holds filings from over 30,000 active and historical registrants. New filings are added daily and made publicly available within minutes of SEC acceptance. There is no subscription fee and no login required for public access, and the data can be used freely for research. Automated tools are expected to follow the SEC's fair access policy, which currently caps requests at 10 per second and asks scripts to identify themselves with a User-Agent header.

What EDGAR contains goes well beyond the periodic reports most teams think of first. The database holds every form type the SEC accepts electronically, including annual reports (10-K), quarterly reports (10-Q), current event reports (8-K), proxy statements (DEF 14A), registration statements (S-1, S-3, S-4), beneficial ownership reports (Schedules 13D and 13G), insider transaction reports (Forms 3, 4, and 5), and the SEC comment letter correspondence released since 2005 (UPLOAD for SEC staff letters, CORRESP for company responses). For disclosure benchmarking purposes, the periodic reports and the comment letter archive are the two most useful data sets.

The scope of what EDGAR makes available is worth sitting with for a moment. Your direct competitors, your closest disclosure peers, and every company in your SIC code that has been public over the last thirty years have filed their periodic and current reports into this database. The disclosure language they settled on after SEC review, the risk factors they added after a cybersecurity incident, the MD&A structure that held up under auditor and SEC scrutiny: all of it is in EDGAR, and most of it is full-text searchable.

How EDGAR Is Structured: What You Need to Know Before You Search

Understanding EDGAR's structure saves significant time in any research workflow. The database organises filings by registrant, form type, and filing date. Every registrant is assigned a unique CIK (Central Index Key) number that functions as their permanent identifier in the system. Every filing is also assigned an accession number, a unique identifier for that specific submission.
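To make the CIK and accession number relationship concrete, here is a minimal Python sketch that turns a CIK and an accession number into the archive folder and filing-index URLs for one submission. It assumes the `https://www.sec.gov/Archives/edgar/data/{cik}/{accession without dashes}/` layout visible in public EDGAR URLs; the function name and the CIK and accession values are hypothetical, for illustration only.

```python
import re

# An accession number such as 0001234567-24-000012 has three parts: a 10-digit ID
# of the submitting entity (often a filing agent rather than the company itself),
# a 2-digit year, and a 6-digit sequence number.
ACCESSION_RE = re.compile(r"^\d{10}-\d{2}-\d{6}$")


def filing_urls(cik: int, accession: str) -> dict:
    """Build the archive folder and filing-index URLs for one EDGAR submission."""
    if not ACCESSION_RE.match(accession):
        raise ValueError(f"unexpected accession number format: {accession!r}")
    # Archive paths use the CIK without leading zeros and the accession number
    # without dashes (layout assumed from public EDGAR URLs).
    folder = f"https://www.sec.gov/Archives/edgar/data/{cik}/{accession.replace('-', '')}/"
    return {
        "folder": folder,
        # The filing index page lists every document in the submission.
        "index": f"{folder}{accession}-index.htm",
    }


# Hypothetical CIK and accession number, for illustration only.
print(filing_urls(1234567, "0001234567-24-000012")["index"])
# https://www.sec.gov/Archives/edgar/data/1234567/000123456724000012/0001234567-24-000012-index.htm
```

Validating the accession format up front catches copy-paste errors before they turn into broken links in a benchmarking workpaper.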

The EDGAR filing index for any company is accessible at a standard URL pattern: www.sec.gov/cgi-bin/browse-edgar?action=getcompany&CIK=[CIK number]. The SEC's newer company page follows the pattern www.sec.gov/edgar/browse/?CIK=[CIK number]. From the filing index, you can filter by form type and date range to find specific filings quickly.

Within each filing, the documents are presented as a filing index listing every file included in the submission: the primary document (an HTML file, which for most current periodic reports carries inline XBRL tags), the exhibits, the XBRL data files, and any graphics. Amendments, such as a 10-K/A, are filed as separate submissions with their own accession numbers. Understanding this structure matters for benchmarking because the section you want is often in a specific exhibit or in a specific part of the primary document, and navigating directly to it is faster than reading the entire filing.

There are three search interfaces that disclosure teams use most frequently, each suited to different research tasks.

EDGAR Full-Text Search, at sec.gov/edgar/search (its results are served from efts.sec.gov), searches the full text of EDGAR filings submitted since 2001. This is the most powerful interface for benchmarking work because it finds the specific language you are looking for across every registrant's filings, not just within a single company's. You can search for a disclosure concept using the SEC's own terminology, or for an exact phrase in quotation marks, and retrieve every filing in that period where the language appears. Full-text search also supports filtering by form type, date range, and company name, which means you can narrow results to a specific filing type within a specific time window across your defined peer group.
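For teams that want to save or script these queries, the sketch below builds an exact-phrase search limited to form types and a date window. It assumes that the search page at sec.gov/edgar/search queries a JSON service at `https://efts.sec.gov/LATEST/search-index` using the parameter names `q`, `dateRange`, `startdt`, `enddt`, and `forms`; that service is not documented by the SEC as a stable API, so treat the endpoint and parameters as assumptions to verify. The function name is hypothetical.

```python
from urllib.parse import urlencode

# Assumption: this is the JSON service the sec.gov/edgar/search page queries.
# It is not a documented SEC API, so the endpoint and parameter names may change.
EFTS_URL = "https://efts.sec.gov/LATEST/search-index"


def full_text_search_url(phrase: str, forms: list, start: str, end: str) -> str:
    """Build an exact-phrase full-text query limited to form types and a date window."""
    params = {
        "q": f'"{phrase}"',        # surrounding quotes request an exact-phrase match
        "dateRange": "custom",
        "startdt": start,          # YYYY-MM-DD
        "enddt": end,              # YYYY-MM-DD
        "forms": ",".join(forms),  # comma-separated form types, e.g. "10-K,10-Q"
    }
    return f"{EFTS_URL}?{urlencode(params)}"


print(full_text_search_url("material weakness", ["10-K", "10-Q"], "2023-01-01", "2024-12-31"))
# https://efts.sec.gov/LATEST/search-index?q=%22material+weakness%22&dateRange=custom&startdt=2023-01-01&enddt=2024-12-31&forms=10-K%2C10-Q
```

If you fetch the URL from a script, send a descriptive User-Agent header and stay within the SEC's published rate limit; for one-off research, the same query typed into the search page is simpler.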

EDGAR Company Search at sec.gov/cgi-bin/browse-edgar searches by company name, CIK number, ticker symbol, or SIC code. The SIC code filter is the most useful for building peer groups. Entering a SIC code and filtering by form type returns a list of every registrant in that industry classification that has filed that form type, which is the foundation of any systematic benchmarking exercise.
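A peer-group search by industry can be captured as a reusable link. The sketch below assumes the legacy browse-edgar interface accepts `action=getcompany` together with `SIC`, `type`, `owner`, and `count` parameters, as its public URLs suggest; check the result in a browser before relying on it. The function name is hypothetical, and SIC 7372 (prepackaged software) is only an example.

```python
from urllib.parse import urlencode


def sic_search_url(sic_code: str, form_type: str = "10-K", count: int = 100) -> str:
    """URL for the legacy EDGAR company search, filtered by SIC code and form type.

    Parameter names follow the legacy browse-edgar interface; treat them as
    assumptions to verify before relying on them.
    """
    params = {
        "action": "getcompany",
        "SIC": sic_code,      # four-digit Standard Industrial Classification code
        "type": form_type,    # restrict to registrants that have filed this form
        "owner": "include",
        "count": count,       # results per page
    }
    return "https://www.sec.gov/cgi-bin/browse-edgar?" + urlencode(params)


# Example: SIC 7372 (prepackaged software), 10-K filers.
print(sic_search_url("7372"))
# https://www.sec.gov/cgi-bin/browse-edgar?action=getcompany&SIC=7372&type=10-K&owner=include&count=100
```

Storing the link rather than a static list means the candidate set can be refreshed each cycle, before the size screen narrows it to true peers.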

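Programmatic access suits teams building automated research workflows or working with large peer groups. The SEC's documented APIs, described in its developer resources, are served from data.sec.gov and cover each registrant's filing history (the submissions API) and its XBRL financial data. The JSON service behind Full-Text Search at efts.sec.gov also returns results programmatically, but the SEC does not document it as a public API, so treat it as subject to change.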
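As an example of the documented route, the sketch below downloads a registrant's filing history from the data.sec.gov submissions API and flattens the most recent filings of one form type. It assumes the response's `filings.recent` object holds parallel arrays named `form`, `filingDate`, `accessionNumber`, and `primaryDocument`, and that it covers only the latest filings (older history sits in separate files), which is our reading of the SEC's published format. The function names and the User-Agent value are placeholders.

```python
import json
import urllib.request

# The SEC's fair access policy asks automated tools to identify themselves.
# Placeholder value: replace with your own organisation and contact address.
HEADERS = {"User-Agent": "Example Corp disclosure research research@example.com"}


def fetch_submissions(cik: int) -> dict:
    """Download a registrant's metadata and filing history (network request)."""
    url = f"https://data.sec.gov/submissions/CIK{cik:010d}.json"  # CIK zero-padded to 10 digits
    req = urllib.request.Request(url, headers=HEADERS)
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.load(resp)


def recent_filings(submissions: dict, form: str) -> list:
    """Turn the parallel arrays under filings.recent into one dict per filing of `form`."""
    recent = submissions["filings"]["recent"]
    rows = zip(recent["form"], recent["filingDate"],
               recent["accessionNumber"], recent["primaryDocument"])
    return [
        {"form": f, "filingDate": d, "accessionNumber": a, "primaryDocument": p}
        for f, d, a, p in rows
        if f == form
    ]


# Usage (makes a network request; hypothetical CIK):
# tenks = recent_filings(fetch_submissions(1234567), "10-K")
```

The same response also carries the registrant's SIC code, which is a convenient way to confirm that each candidate peer sits in the industry you screened for.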

What Peer Benchmarking on EDGAR Actually Means in Practice

Peer benchmarking using EDGAR means using the database to answer a specific question about your own disclosure: how does what we are disclosing compare to what our peers are disclosing on the same topic, in the same filing type, in the current period?

That question sounds simple. In practice, it has several layers that disclosure teams navigate differently depending on their resources and experience.

Defining your peer group is the first decision. For most benchmarking purposes, a peer group has two components: companies in the same SIC code or sub-industry, and companies of comparable size by revenue or market cap. The SIC code filter in EDGAR Company Search gives you the industry component. The size filter requires an additional step, either pulling financial data from a separate source or reviewing the filings themselves.
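The two-component definition can be expressed as a simple filter. In this minimal sketch, the revenue figures are assumed to come from whatever separate source your team already uses, and the default size band (half to twice your own revenue) is an illustrative choice rather than a standard. The class, function, and company names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    sic: str
    revenue_musd: float  # annual revenue in USD millions, from your own data source


def build_peer_group(candidates, sic_codes, our_revenue_musd, band=(0.5, 2.0)):
    """Keep candidates in the chosen SIC codes whose revenue falls within a size band.

    The default band (half to twice our revenue) is illustrative, not a standard.
    """
    low, high = our_revenue_musd * band[0], our_revenue_musd * band[1]
    return [
        c for c in candidates
        if c.sic in sic_codes and low <= c.revenue_musd <= high
    ]


# Hypothetical companies for illustration.
candidates = [
    Candidate("Alpha Software", "7372", 820.0),
    Candidate("Beta Systems", "7372", 5400.0),   # outside the size band
    Candidate("Gamma Devices", "3674", 900.0),   # different SIC code
    Candidate("Delta Apps", "7372", 450.0),
]
peers = build_peer_group(candidates, {"7372"}, our_revenue_musd=600.0)
print([p.name for p in peers])  # ['Alpha Software', 'Delta Apps']
```

Passing a set of SIC codes, rather than one, lets you include adjacent classifications when a single code is too narrow.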

The peer group for MD&A benchmarking may differ from the peer group for risk factor benchmarking. For MD&A, you want companies facing similar operational and market conditions. For risk factor benchmarking, you may want a broader set that includes companies that have recently navigated specific risk events (a cybersecurity incident, a restatement, a significant acquisition), regardless of size or sub-industry.

Selecting the right filing period is the second decision. For benchmarking current disclosure language, you want filings from the most recent completed period for each peer. For understanding how disclosure practices have evolved, you may want to pull filings from two or three periods. EDGAR's date range filter makes this straightforward.
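The same date logic applies once each peer's filing history is in hand. This sketch assumes filings are dicts with an ISO `filingDate` string, as EDGAR reports them; the function name and the sample history are hypothetical. One call picks the current-period filing, and a wider window returns several periods for trend work.

```python
from datetime import date


def filings_in_window(filings, start: date, end: date):
    """Filings whose filingDate (YYYY-MM-DD) falls within [start, end], newest first."""
    selected = [f for f in filings if start <= date.fromisoformat(f["filingDate"]) <= end]
    return sorted(selected, key=lambda f: f["filingDate"], reverse=True)


# Hypothetical 10-K history for one peer, e.g. as parsed from its EDGAR filing index.
history = [
    {"form": "10-K", "filingDate": "2025-02-20"},
    {"form": "10-K", "filingDate": "2024-02-22"},
    {"form": "10-K", "filingDate": "2023-02-23"},
]

# Current-period benchmarking: the most recent filing in the window.
latest = filings_in_window(history, date(2024, 7, 1), date(2025, 6, 30))[0]

# Evolution over time: the last three periods.
trend = filings_in_window(history, date(2022, 7, 1), date(2025, 6, 30))
print(latest["filingDate"], len(trend))  # 2025-02-20 3
```

An empty result is a useful signal in itself: the peer may have changed its fiscal year, filed late, or stopped filing.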

Extracting the relevant section from each filing is where most teams lose time. A 10-K can be 150 to 300 pages. The section you want for MD&A benchmarking is Part II, Item 7. The section you want for risk factor benchmarking is Part I, Item 1A. For a peer group of 12 companies, manually navigating to and extracting the relevant section from 12 separate filings takes the better part of a day, and the output is 12 separate documents that still need to be compared.
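Part of the extraction step can be scripted. The heuristic sketch below pulls Item 1A or Item 7 out of a 10-K that has already been converted from HTML to plain text (that conversion is assumed, not shown). The heading patterns are assumptions that cover common layouts only, and the longest-span rule is our choice for skipping the table of contents, which repeats the same headings. The names are hypothetical, and a person should still check each extract.

```python
import re

# Heading patterns for two commonly benchmarked 10-K sections. Real filings vary
# (punctuation, capitalisation, extra words), so these are a starting point only.
# Each start pattern consumes the rest of its heading line.
ITEM_1A = re.compile(r"^\s*item\s+1a\.?\s*risk\s+factors[^\n]*", re.IGNORECASE | re.MULTILINE)
ITEM_1A_END = re.compile(r"^\s*item\s+1b\.?", re.IGNORECASE | re.MULTILINE)
ITEM_7 = re.compile(r"^\s*item\s+7\.?\s*management[^\n]*", re.IGNORECASE | re.MULTILINE)
ITEM_7_END = re.compile(r"^\s*item\s+(?:7a|8)\.?", re.IGNORECASE | re.MULTILINE)


def extract_section(text: str, start_re: re.Pattern, end_re: re.Pattern) -> str:
    """Return the longest span between a start heading and the next end heading.

    The table of contents repeats the same headings with little between them,
    so the longest bounded span is normally the body text. Returns an empty
    string if no bounded span is found.
    """
    best = ""
    for start in start_re.finditer(text):
        end = end_re.search(text, start.end())
        if end is None:
            continue
        candidate = text[start.end():end.start()].strip()
        if len(candidate) > len(best):
            best = candidate
    return best
```

Usage is one call per filing, for example `extract_section(tenk_text, ITEM_1A, ITEM_1A_END)`; the Item 7 end pattern accepts Item 8 as well because smaller reporting companies may omit Item 7A.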

Comparing and synthesising the extracted sections is the final step and the one that requires the most judgment. The questions to answer are: What topics does this disclosure cover that ours does not? Where do peers provide quantitative specificity where we use qualitative language? What structural choices do peers make that we could adopt? How many paragraphs do peers devote to this topic compared to us?
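Some of these questions have measurable proxies that make the comparison consistent across peers. The sketch below computes word and paragraph counts, a count of numeric tokens as a rough stand-in for quantitative specificity, and keyword-based topic coverage. The keyword lists are assumptions your team would define, and none of these numbers replaces reading the text. The function name is hypothetical.

```python
import re

# Numeric tokens such as 12%, $4.5 or 1,200. Years also match, so the count is crude.
NUMBER_RE = re.compile(r"\$?\d[\d,]*(?:\.\d+)?%?")


def section_metrics(text: str, topics: dict) -> dict:
    """Comparable, deliberately simple metrics for one extracted disclosure section."""
    lowered = text.lower()
    paragraphs = [p for p in re.split(r"\n\s*\n", text) if p.strip()]
    return {
        "words": len(text.split()),
        "paragraphs": len(paragraphs),
        # Rough proxy for quantitative specificity.
        "numbers": len(NUMBER_RE.findall(text)),
        # Topics with at least one keyword present in the section.
        "topics": sorted(t for t, kws in topics.items() if any(k in lowered for k in kws)),
    }


example = "Revenue increased 12% to $4.5 million.\n\nThe increase reflects higher volume."
print(section_metrics(example, {"pricing": ["price"], "volume": ["volume"]}))
# {'words': 11, 'paragraphs': 2, 'numbers': 2, 'topics': ['volume']}
```

Running the same function over your draft and each peer's section gives every filing the same yardstick, which is what makes a side-by-side comparison meaningful.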

This is analytically demanding work. It requires reading comprehensively, maintaining a structured comparison framework across all peer filings, and synthesising the findings into actionable conclusions for your own draft. Done manually for a peer group of 10 to 12 companies across three or four disclosure topics, this is a multi-day exercise that most teams can only afford to run once per filing cycle.

The Five Benchmarking Use Cases Where EDGAR Research Has the Most Impact

Not all disclosure sections benefit equally from peer benchmarking. The following five are where EDGAR research consistently changes the quality of the output and reduces SEC comment letter risk.

MD&A narrative structure and depth. MD&A is consistently among the most frequent sources of SEC staff comments on periodic reports, and a recurring trigger is MD&A that describes results without explaining the reasons behind them. Peer benchmarking reveals not just how comparable companies describe similar results, but how they structure the analytical narrative: which metrics they lead with, how they connect operational drivers to financial outcomes, and how much forward-looking context they provide. Teams that benchmark their MD&A structure against peers before filing tend to produce more analytically complete sections.

Risk factor specificity and coverage. Generic risk factors, those that could apply to any public company in any industry, draw increasing SEC scrutiny; since the 2020 amendments to Item 105 of Regulation S-K, companies that include them must present them at the end of the section under a "General Risk Factors" caption. Peer benchmarking shows you which risk categories your peer group covers, how specifically they describe each risk, and which emerging risks (AI-related governance, supply chain concentration, climate-related financial impacts) peers have added recently that you have not yet addressed. This is the most time-efficient way to identify risk factor gaps before filing.
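Once reviewers have tagged each peer's Item 1A with the risk topics it covers, finding gaps is a counting exercise. In this minimal sketch the topic tags are hypothetical, and the rule that a topic counts as market practice when at least half the peer group covers it is an illustrative threshold, not an SEC standard.

```python
from collections import Counter


def coverage_gaps(ours: set, peers: dict, min_share: float = 0.5) -> list:
    """Risk topics covered by at least `min_share` of peers but missing from our draft."""
    counts = Counter(topic for topics in peers.values() for topic in topics)
    threshold = min_share * len(peers)
    return sorted(t for t, n in counts.items() if n >= threshold and t not in ours)


# Hypothetical topic tags assigned to each peer's Item 1A during review.
peer_topics = {
    "Peer A": {"cybersecurity", "ai governance", "supply chain concentration"},
    "Peer B": {"cybersecurity", "supply chain concentration"},
    "Peer C": {"cybersecurity", "climate impacts"},
}
print(coverage_gaps({"cybersecurity"}, peer_topics))  # ['supply chain concentration']
```

Lowering `min_share` surfaces emerging topics that only one or two peers have added so far, which is often where the earliest signal sits.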

Non-GAAP measure presentation and reconciliation. The format, labelling, and reconciliation structure of non-GAAP measures varies across companies in ways that reflect both company preference and SEC review feedback. Benchmarking how your peer group presents Adjusted EBITDA, Free Cash Flow, or other non-GAAP metrics reveals the current market standard for prominence, reconciliation structure, and explanatory language, which is directly relevant to the SEC's scrutiny of non-GAAP compliance.

Revenue recognition policy footnote depth. Revenue recognition policy disclosures under ASC 606 (Revenue from Contracts with Customers) vary significantly in depth and specificity across companies with similar business models. For companies with complex revenue streams, the gap between thin and comprehensive ASC 606 footnotes is often a useful signal of whether the SEC staff will ask for additional disclosure. Peer benchmarking the footnote length, the topics covered, and the level of quantitative specificity provided gives you a clear target for your own disclosure depth.

Cybersecurity risk factor and governance language. Since the SEC's cybersecurity disclosure rules, adopted in July 2023, began taking effect in December 2023, risk factor sections and Item 1C (Cybersecurity) disclosures have been an active SEC review priority. The range of disclosure depth and specificity across companies in the same sector is wide, and the direction of travel appears to be toward more specificity. Benchmarking the most recently filed peer disclosures in this area shows you both the current floor and the current ceiling, so your team can make an informed decision about where your disclosure should sit.

How Finrep's Grid Reports Transform EDGAR Benchmarking

The manual EDGAR benchmarking process described above is valuable but slow. Building a peer group, locating the right sections across 10 to 12 filings, extracting the relevant language, and organising it into a structured comparison takes two to three days of focused analyst work per disclosure topic. For a filing team running a full benchmarking exercise across five topics before a 10-K, that adds up to 10 to 15 working days, or two to three weeks.

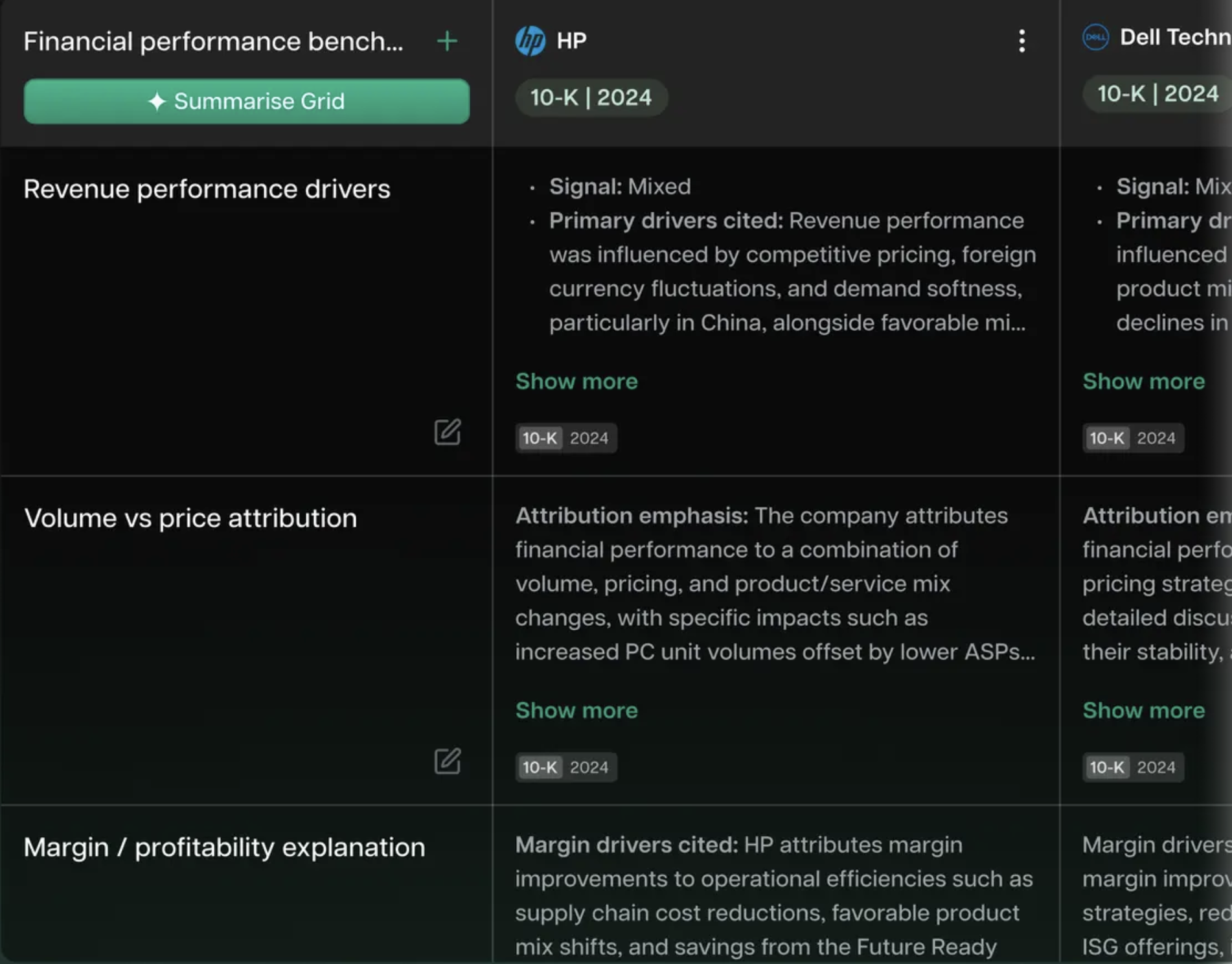

Finrep's Grid Reports feature is built specifically to compress this workflow. The output is a structured, side-by-side grid view of how your defined peer group addresses any disclosure topic, with verbatim EDGAR language from each company, coverage depth comparison, and your own draft positioned in the same view for direct comparison.

Here is what the workflow looks like in practice.

Define your peer group and topic. You specify the companies you want to benchmark against and the disclosure topic you are researching. This can be a named disclosure section (MD&A liquidity discussion, risk factor cybersecurity, ASC 606 revenue policy) or a specific concept using EDGAR search language.

Grid Reports pulls the relevant sections from each peer's most recent filing. Rather than navigating 12 separate EDGAR filing indexes and extracting sections manually, Finrep retrieves the relevant content from each peer's filing automatically. The result is organised into a grid with one row per peer company and columns covering the key dimensions of the disclosure: topics covered, quantitative specificity, structural choices, and word count.

Your draft sits in the same grid. Rather than comparing your draft to peer filings in separate documents, your current draft language is inserted into the grid view alongside the peer data. You can see immediately where your disclosure is thinner than the peer group, where you are more detailed, and which topics peers cover that your draft does not address.

The grid is exportable and citable. Every cell in the grid links back to the source EDGAR filing, with the specific section and filing date identified. When a reviewer asks why a specific disclosure change was made, the answer is documented: your team benchmarked against the peer group and found that your prior language was materially thinner than market practice on this specific topic. That is a defensible, documented basis for the change.
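For teams curious about the mechanics, here is a small standard-library sketch of the same idea: peer rows and your draft in one CSV grid, each row carrying its source link, with the draft flagged where it falls below the peer median. This is not Finrep's implementation; the row fields (`company`, `words`, `numbers`, `source_url`), the median rule, and the example.com links are all hypothetical.

```python
import csv
import io
from statistics import median

FIELDS = ["company", "words", "numbers", "source_url", "flag"]


def build_grid(peers: list, draft: dict) -> str:
    """Render peer rows plus our draft as CSV, flagging where the draft is below the peer median."""
    med_words = median(p["words"] for p in peers)
    med_numbers = median(p["numbers"] for p in peers)
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=FIELDS, extrasaction="ignore")
    writer.writeheader()
    for p in peers:
        writer.writerow({**p, "flag": ""})
    flags = []
    if draft["words"] < med_words:
        flags.append("shorter than peer median")
    if draft["numbers"] < med_numbers:
        flags.append("fewer figures than peer median")
    writer.writerow({**draft, "flag": "; ".join(flags)})
    return out.getvalue()


# Hypothetical rows; source_url would point at each peer's EDGAR filing.
peers = [
    {"company": "Peer A", "words": 900, "numbers": 14, "source_url": "https://example.com/peer-a"},
    {"company": "Peer B", "words": 1100, "numbers": 20, "source_url": "https://example.com/peer-b"},
]
draft = {"company": "Our draft", "words": 600, "numbers": 3, "source_url": ""}
print(build_grid(peers, draft))
```

Keeping the source link in every row is what makes the output citable: each comparison can be traced back to the filing it came from.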

According to Finrep's 2025 client data, teams using Finrep's benchmarking tools reduce the research and benchmarking portion of their SEC reporting preparation from 10 days to 3 to 4 days, with 60 to 70% fewer review loops with auditors.

Request access to Finrep's Grid Reports feature

Building a Repeatable EDGAR Benchmarking Process

The disclosure teams that get the most value from EDGAR benchmarking do it systematically rather than ad hoc. A repeatable process built into the filing calendar produces better results than a one-time exercise done under deadline pressure.

Set your peer group once and review it annually. Your benchmarking peer group should be defined at the start of the fiscal year and reviewed annually. Companies leave the public markets, get acquired, or change their business model. A peer group that made sense two years ago may not reflect your current competitive and disclosure environment. Finrep's Peer Alignment agent can help maintain this peer list by flagging when peer group companies make material changes to their disclosure structure.

Assign benchmarking ownership by section. In most CFO offices, different people own different sections of the filing. MD&A is typically owned by FP&A or SEC reporting. Risk factors are typically owned by legal or the disclosure committee. Assigning EDGAR benchmarking responsibility to the section owner, rather than running it as a centralised exercise, produces more relevant and actionable findings.

Run benchmarking before drafting, not after. The highest-value use of EDGAR benchmarking is as input to the drafting process, not as a review step after the draft is complete. If you know before you write a word that your peer group uses three paragraphs of quantitative analysis in their liquidity discussion, you draft to that standard from the start rather than rewriting after the fact.

Document the findings. For each benchmarking exercise, maintain a brief record of what was compared, what the peer group showed, and what changes were made to your draft in response. This documentation supports your disclosure controls certification and provides the basis for a defensible response if the SEC raises a question about a specific disclosure section.
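A consistent record format makes that documentation easy to file and search later. The minimal sketch below captures the three elements named above (what was compared, what the peer group showed, and what changed) as a JSON-serialisable record. The field names and example content are hypothetical, not a prescribed format for disclosure committee files.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date


@dataclass
class BenchmarkRecord:
    """One benchmarking exercise, kept alongside the disclosure committee file."""
    topic: str            # e.g. "Item 1A: cybersecurity"
    filing: str           # the filing being drafted, e.g. "FY2025 10-K"
    peer_sources: dict    # peer name -> EDGAR URL of the filing compared
    findings: str         # what the peer group showed
    draft_changes: str    # what changed in our draft in response
    recorded_on: str = field(default_factory=lambda: date.today().isoformat())


# Hypothetical example record.
record = BenchmarkRecord(
    topic="Item 1A: cybersecurity",
    filing="FY2025 10-K",
    peer_sources={"Peer A": "https://example.com/peer-a-10k"},
    findings="Most peers describe incident response testing; our draft did not.",
    draft_changes="Added a paragraph on tabletop exercises and escalation.",
)
print(json.dumps(asdict(record), indent=2))
```

Plain JSON keeps the record readable without special tooling and easy to attach to disclosure committee minutes.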

Frequently Asked Questions

What is the SEC EDGAR database and who can access it?

EDGAR, the SEC's Electronic Data Gathering, Analysis, and Retrieval system, is the official repository for filings submitted electronically to the SEC by public companies, mutual funds, and other registered entities. It contains more than 10 million filings spanning over three decades, including 10-K annual reports, 10-Q quarterly reports, 8-K current event reports, proxy statements, registration statements, beneficial ownership reports, and the SEC comment letter correspondence released since 2005. Access is entirely free and requires no login or subscription. The main search interface is available at sec.gov/edgar/search.

How do I search EDGAR for peer company disclosures?

The most effective search interface for peer benchmarking is EDGAR Full-Text Search, which searches the complete text of all filings. Enter the disclosure concept you want to research using SEC terminology, filter by form type (10-K or 10-Q), set your date range to the most recent 12 to 24 months, and add company name filters for your defined peer group. For building a peer group by industry, use EDGAR Company Search with the SIC code filter to find all registrants in your sector.

What disclosure topics benefit most from EDGAR peer benchmarking?

The five areas where peer benchmarking produces the most consistent impact on disclosure quality and SEC comment letter risk are MD&A narrative structure and depth, risk factor specificity and coverage, non-GAAP measure presentation and reconciliation format, revenue recognition policy footnote depth under ASC 606, and cybersecurity risk factor and governance language. These are also among the areas where the SEC's review attention has been most consistently focused, which makes them the highest-priority topics for any systematic benchmarking programme.

How does Finrep's Grid Reports feature differ from manual EDGAR research?

Manual EDGAR benchmarking requires navigating individual company filing indexes, identifying and extracting the relevant sections, and building a comparison framework by hand across all peer filings. Finrep's Grid Reports retrieves the relevant sections from each peer's most recent filing automatically, organises them into a structured side-by-side grid with verbatim EDGAR language, and positions your current draft in the same view for direct comparison. Every cell links back to the source EDGAR filing. Research that takes two to three days manually takes a fraction of that time with Grid Reports, with a structured, citable output rather than a collection of documents.

How often should disclosure teams run EDGAR benchmarking exercises?

For annual 10-K preparation, a full benchmarking exercise across your five highest-priority disclosure topics should be completed four to six weeks before your planned filing date, with enough time to incorporate the findings into the draft. For 10-Q preparation, a targeted benchmarking exercise on any disclosure area that changed materially during the quarter is appropriate. Risk factor benchmarking specifically should be updated at least annually, with a targeted review whenever a significant market event or regulatory development affects your sector. According to the Harvard Law School Forum on Corporate Governance, leading disclosure committees now treat peer benchmarking as a standard pre-filing agenda item rather than an occasional research exercise.

Is EDGAR data reliable for benchmarking purposes?

EDGAR is the primary source for disclosure benchmarking: it holds the filings as registrants submitted them, not a third party's summary of them. Acceptance by EDGAR, however, is a technical check of format and submission requirements, not a substantive review. The SEC's Division of Corporation Finance reviews filings selectively, and each reporting company at least once every three years, and staff letters remind companies that they remain responsible for the accuracy and adequacy of their disclosures notwithstanding any review, comments, or absence of action by the staff. Language that persists across several periods without drawing a comment is therefore a useful signal of market practice, not proof of SEC approval. For benchmarking purposes, it is still a stronger starting point than any secondary research source.

Key Takeaways

- The SEC EDGAR database contains more than 10 million public filings spanning three decades. For disclosure teams that know how to use it, EDGAR is the most comprehensive and authoritative peer benchmarking resource available, at no cost.

- EDGAR's Full-Text Search interface and Company Search with SIC code filtering are the two most useful tools for building structured peer benchmarking research. The comment letter correspondence archive, accessible through the CORRESP and UPLOAD form types, adds a layer of SEC review intelligence on top of the peer filing data.

- The five disclosure areas where EDGAR benchmarking has the most consistent impact on quality and comment letter risk are MD&A depth, risk factor specificity, non-GAAP presentation, ASC 606 footnote coverage, and cybersecurity governance language.

- Manual EDGAR benchmarking is thorough but takes two to three days per disclosure topic for a peer group of 10 to 12 companies. Finrep's Grid Reports compresses this into a structured, side-by-side grid with verbatim EDGAR language, your draft in the same view, and source links for every cell.

- The teams that get the most value from EDGAR benchmarking run it systematically before drafting, not as a review step after the draft is complete. A defined peer group, assigned section ownership, and documented findings are the foundations of a repeatable process.

Finrep is an AI-powered financial disclosure intelligence platform for the Office of the CFO. 40 purpose-built AI agents for SEC reporting, technical accounting, investor relations, legal counsel, and disclosure committee functions. SOC2 Type II and ISO 27001 certified. Zero data residency. Backed by Accel. Trusted by CFO teams at FOX, Roku, HP, RingCentral, Wells Fargo, and Infosys.

For further reading on how AI accelerates EDGAR research for disclosure teams, see how Finrep compares to Intelligize for EDGAR research and financial disclosure benchmarking and the Section 16 deadline guide for Foreign Private Issuers.