AI adoption and cyber threats are expanding the scope of board-level oversight. As these risks grow in complexity and regulatory scrutiny intensifies, inadequate oversight is no longer just a management issue — it carries fiduciary consequences.

The Wake-Up Call: Why Now?

Boards face mounting pressure to demonstrate AI and cybersecurity oversight for three reasons: new SEC cybersecurity disclosure rules that require material incidents to be reported within four business days, intensified scrutiny from proxy advisory firms such as ISS and Glass Lewis, and growing shareholder demands for verified technology expertise among directors. Failure to show robust oversight now carries fiduciary, regulatory, and reputational consequences.

Shareholders are demanding more detailed disclosure on technology oversight, and proxy advisory firms such as ISS and Glass Lewis are scrutinizing board technology expertise more closely. The SEC adopted its cybersecurity disclosure rules in July 2023, requiring companies to report a cybersecurity incident on Form 8-K within four business days of determining that it is material (SEC, 2023). Meanwhile, AI governance has moved from an IT concern to a strategic business risk. According to a Deloitte survey, 62% of organizations now classify AI as a board-level risk area (Deloitte, 2024).

SEC Chair Gary Gensler stated in a 2023 address that "cybersecurity is an emerging risk that public companies need to disclose to investors, and investors need to be able to evaluate that risk." Boards that cannot demonstrate robust AI and cyber oversight risk regulatory penalties, shareholder lawsuits, and reputational damage.

The Director's Dilemma

According to the National Association of Corporate Directors (NACD) 2024 Board Practices Survey, 65% of directors report they lack sufficient expertise to effectively oversee AI and cybersecurity risks (NACD, 2024). Yet these same directors are legally responsible for ensuring adequate controls exist. This knowledge gap creates material liability exposure.

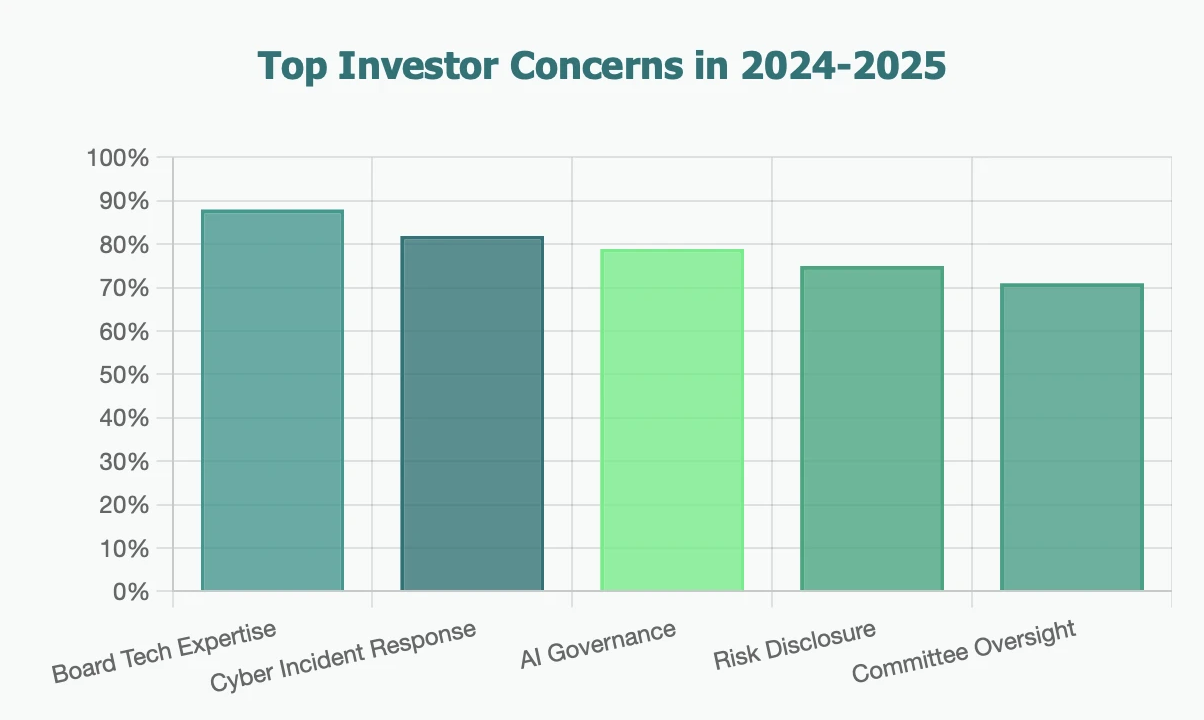

What Investors Are Scrutinizing in Proxy Statements

Institutional investors evaluate four critical elements in proxy statements: board composition with verified technology and cybersecurity credentials, committee structures with dedicated AI and cyber oversight assignments, enterprise-level risk assessment frameworks for identifying and mitigating technology risks, and documented incident response protocols with tested crisis management playbooks.

In practice, those elements translate into four questions:

- Board Composition: Does the board include directors with genuine technology, AI, or cybersecurity credentials? Vague "digital experience" isn't cutting it anymore.

- Committee Structure: Has the board established dedicated oversight mechanisms—whether through specialized committees or clear assignments to existing committees?

- Risk Assessment Framework: Can the company articulate how it identifies, measures, and mitigates AI and cyber risks at the enterprise level?

- Incident Response Protocols: Does the board have visibility into the threat landscape, and is there a tested playbook for crisis management?

The AI Governance Imperative

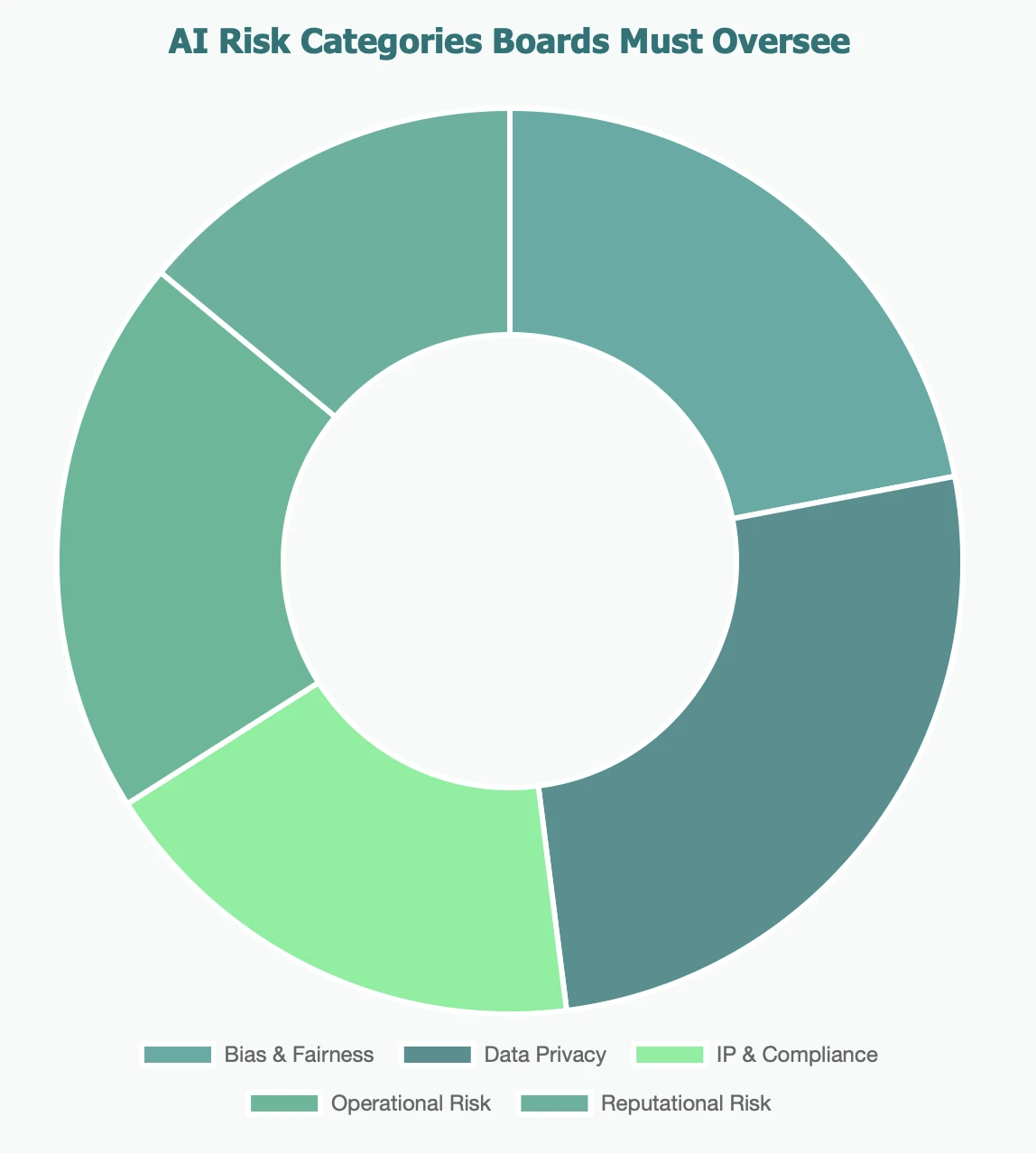

AI governance has become a strategic board-level priority because generative AI introduces risks spanning algorithmic bias, intellectual property infringement, privacy violations, and operational reliability. Leading companies are responding by establishing AI Ethics Committees, appointing Chief AI Officers with direct board reporting lines, and implementing AI impact assessments for high-stakes applications as baseline governance requirements.

Generative AI has significantly altered the risk landscape. Companies deploying AI systems face challenges ranging from algorithmic bias and intellectual property infringement to privacy violations and operational reliability issues. EY's 2024 Global Board Risk Survey found that only 35% of boards have a formal AI governance framework in place (EY, 2024). Yet many boards still treat AI as a tactical IT decision rather than a strategic governance priority.

Progressive companies are establishing AI Ethics Committees, appointing Chief AI Officers who report directly to the board, and implementing AI impact assessments for high-stakes applications. These are becoming baseline expectations for responsible corporate governance.

Cyber Oversight: Beyond Compliance Theater

Effective board-level cybersecurity oversight now requires continuous monitoring dashboards rather than quarterly briefings, regular tabletop exercises simulating ransomware and supply chain attacks, tested incident response protocols, and integration of cyber risk discussions into strategic planning. Boards should also ensure at least two directors hold verified cybersecurity or technology credentials.

The cybersecurity landscape has matured beyond checkbox compliance. According to IBM's 2024 Cost of a Data Breach Report, the global average cost of a data breach reached $4.88 million (IBM, 2024). With ransomware attacks disrupting critical infrastructure, supply chain vulnerabilities exposing entire ecosystems, and nation-state actors targeting intellectual property, boards need real-time threat intelligence and scenario planning. PCAOB Chair Erica Williams has noted that "audit committees must understand how technology risks could affect the reliability of financial reporting."

Leading boards are moving from quarterly briefings to continuous monitoring dashboards. They're conducting tabletop exercises that simulate ransomware attacks, testing incident response protocols, and ensuring cyber risk discussions are integrated into strategic planning—not relegated to audit committee afterthoughts.

💡 Best Practice Spotlight

Top-performing boards dedicate at least one full board meeting annually to deep-dive technology risk sessions, bringing in external experts to stress-test assumptions and challenge management's risk appetite. They also ensure at least two directors have significant cybersecurity or technology credentials verified through formal training or professional experience.

Crafting Your Proxy Statement Disclosure

Strong proxy statement disclosure on AI and cyber oversight should include specific director credentials such as CISO roles or AI product development experience, clear committee charter assignments for technology governance, evidence of active oversight through documented board discussions and decisions, and explicit links showing how AI and cyber considerations influence capital allocation and strategic positioning.

Effective proxy statement disclosure on AI and cyber oversight includes:

- Be Specific About Expertise: Don't just say "technology experience." Detail the director's background: CISO roles, AI product development, enterprise security architecture.

- Explain Your Structure: Describe which committee owns AI governance versus cyber oversight, meeting frequency, and external advisor engagement.

- Demonstrate Active Oversight: Highlight key discussions, decisions made, investments approved, and how the board responded to emerging threats.

- Link to Strategy: Show how AI and cyber considerations influence capital allocation, M&A decisions, and competitive positioning.

The Bottom Line

In 2025 and beyond, AI and cybersecurity oversight aren't specialized topics for tech companies—they're fundamental governance responsibilities for every board. Your proxy statement is the most visible indicator of whether your board is prepared for this reality or dangerously behind.

Action Steps for Directors

If you're preparing for your next proxy season, now is the time to evaluate your board's readiness:

- Conduct a skills gap analysis focused specifically on AI and cyber expertise

- Consider recruiting directors with relevant technical credentials

- Establish clear committee charters that assign AI and cyber oversight responsibilities

- Implement board education programs on emerging technologies and threat landscapes

- Review your proxy statement disclosure with fresh eyes—would an institutional investor be satisfied?

The expectation is clear: boards must demonstrate meaningful AI and cyber oversight. KPMG's Board Leadership Center recommends that proxy statements explicitly address technology risk governance structures. Proxy statements that reflect active engagement with technology risk are better positioned against activist pressure and regulatory scrutiny than those that treat it as an afterthought.