The accounting close used to be a sprint you ran twice a year and survived. Now it's a recurring, compressed, high-stakes process that feeds directly into SEC filings, and the margin for error is smaller than it's ever been.

According to a Deloitte CFO Signals survey, more than 70% of finance leaders cited the accounting close as their top process improvement priority (Deloitte, 2024). Most finance teams are still running this cycle the same way they did a decade ago: spreadsheets stitched together with macros, manual reconciliations that take two days, disclosure language drafted from scratch, and a final review week that stretches every resource.

AI in accounting is changing each of those steps, not by replacing the accountants running them, but by collapsing the time and error rate of the work that doesn't require a CPA's judgment. This guide covers how AI is being applied across the accounting close and SEC reporting workflow in 2026: which close and reporting tasks AI handles reliably, what a modern AI-assisted close checklist looks like, how specific AI modules map to workflow steps, what the practical limitations are, and where purpose-built platforms fit into the process.

What Does "AI in Accounting" Actually Mean for the Close Cycle?

AI in accounting applies machine learning, natural language processing, and large language models to automate or augment tasks in the financial close and reporting process. Unlike rule-based robotic process automation, AI systems learn from data, adapt to variation, and handle unstructured inputs like contract language and SEC comment letters across four close phases: reconciliation, management reporting, financial statement preparation, and filing review.

In practice this means AI that reconciles accounts by pattern-matching against prior periods, AI that drafts or benchmarks disclosure language against peer filings, and AI that surfaces anomalies in journal entries before a human reviewer sees them.

This is not robotic process automation (RPA) rebranded. RPA follows fixed rules: it executes a predetermined sequence of steps and fails when anything changes. AI systems learn from data, adapt to variation, and can handle unstructured inputs like contract language, earnings call transcripts, or SEC comment letters. A Gartner research report projected that by 2026, 50% of organizations would have adopted AI in their financial close processes, up from fewer than 10% in 2022 (Gartner, 2023). The practical difference for a close cycle: RPA can auto-populate a cell if the format is identical every month; AI can identify that a lease obligation in a draft 10-K doesn't match the ASC 842 (Accounting Standards Codification Topic 842, Leases) treatment in the prior-year filing, even when the language changed.

The close cycle typically has four phases where AI is now being applied: subledger close and reconciliation, management reporting, financial statement preparation, and SEC filing and review. Each phase has a different level of AI readiness: some tasks are well-suited to automation today; others require human judgment that AI tools can inform but not replace.

Which Accounting Close Tasks Is AI Changing Most?

The five accounting close tasks most transformed by AI in 2026 are account reconciliation, journal entry review, variance analysis, intercompany elimination, and disclosure roll-forwards. These tasks share common traits: high volume, rule-governed logic, and historically manual execution. AI compresses reconciliation from four to five days down to one to two days and enables full-population journal entry scanning rather than sampling.

The accounting close tasks with the highest AI impact in 2026 are those that are high-volume, rule-governed, and historically manual. They fall into five categories.

Account reconciliation is where AI has penetrated most deeply. AI-powered reconciliation tools match transactions against expected patterns from prior periods, flag exceptions that fall outside tolerance thresholds, and auto-certify accounts where no exceptions exist. For a team managing 400+ accounts per close, this compresses the reconciliation phase from 4–5 days to 1–2 days; the remaining time is human review of the flagged exceptions, not the matching itself.
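The flag-or-certify logic described above can be sketched in a few lines. This is a minimal illustration only: it uses a fixed dollar tolerance as a stand-in for the learned prior-period patterns a real reconciliation tool would apply, and the names `AccountBalance`, `reconcile`, and `tolerance`, along with the sample figures, are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccountBalance:
    account: str
    ledger: float     # general-ledger balance for the period
    subledger: float  # supporting subledger or bank balance


def reconcile(balances, tolerance=1.00):
    """Split accounts into auto-certified names and (account, difference) exceptions."""
    certified, exceptions = [], []
    for b in balances:
        diff = round(b.ledger - b.subledger, 2)
        if abs(diff) <= tolerance:
            certified.append(b.account)          # no exception: eligible for auto-certification
        else:
            exceptions.append((b.account, diff))  # outside tolerance: route to human review
    return certified, exceptions


# Hypothetical sample data for illustration.
balances = [
    AccountBalance("1000-Cash", 250_000.00, 250_000.00),
    AccountBalance("1200-AR", 98_450.25, 98_450.75),
    AccountBalance("2000-AP", 41_300.00, 39_800.00),
]
certified, exceptions = reconcile(balances)
print("Auto-certified:", certified)
print("For review:", exceptions)
```

The design point is the split itself: only the `exceptions` list consumes reviewer time, which is where the compression in the reconciliation phase comes from.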

Journal entry review is the second highest-impact area. AI can scan the full population of journal entries, not a sample, and flag entries that are statistically anomalous: unusual dollar amounts, unusual posting times, unusual account combinations, or entries that lack supporting documentation. This both improves close efficiency and strengthens internal controls. PCAOB Auditing Standard AS 2401 (Consideration of Fraud in a Financial Statement Audit) increasingly expects controls that cover the full journal entry population, not just sampled items. As PCAOB Chair Erica Williams has emphasized, technology-enabled audit procedures, including full-population testing, represent a significant step forward for audit quality.
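As a rough sketch of what full-population scanning looks like, the example below checks every entry against three of the signals named above. It assumes a modified z-score (median and median absolute deviation) for unusual amounts and simple rules for posting time and missing support; production tools would use richer models, and the field names, cutoffs, and sample entries here are hypothetical.

```python
from statistics import median


def flag_entries(entries, z_cutoff=3.5, early_hour=6, late_hour=22):
    """Scan every journal entry and return {entry id: [reasons]} for flagged items."""
    amounts = [e["amount"] for e in entries]
    med = median(amounts)
    mad = median([abs(a - med) for a in amounts])  # median absolute deviation
    flagged = {}
    for e in entries:
        reasons = []
        # Modified z-score: robust to the very outliers we are trying to find.
        if mad > 0 and 0.6745 * abs(e["amount"] - med) / mad > z_cutoff:
            reasons.append("unusual amount")
        if e["hour"] < early_hour or e["hour"] >= late_hour:
            reasons.append("unusual posting time")
        if not e["has_support"]:
            reasons.append("missing support")
        if reasons:
            flagged[e["id"]] = reasons
    return flagged


# Hypothetical journal entry population.
entries = [
    {"id": "JE-1", "amount": 1_200, "hour": 10, "has_support": True},
    {"id": "JE-2", "amount": 1_350, "hour": 14, "has_support": True},
    {"id": "JE-3", "amount": 1_100, "hour": 11, "has_support": True},
    {"id": "JE-4", "amount": 1_250, "hour": 9, "has_support": True},
    {"id": "JE-5", "amount": 98_000, "hour": 23, "has_support": False},
    {"id": "JE-6", "amount": 1_300, "hour": 15, "has_support": False},
]
flagged = flag_entries(entries)
for entry_id, reasons in flagged.items():
    print(entry_id, reasons)
```

Because the loop touches every entry rather than a sample, the controller's review queue is the flagged set only.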

Variance analysis, the process of explaining period-over-period changes in account balances, can be partially automated by AI. Natural language generation tools can produce first-draft variance commentary by comparing current-period balances against prior periods and budget, then generating plain-language explanations for significant changes. The controller still reviews and approves; the AI eliminates the blank-page problem for the first draft.
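To make the first-draft idea concrete, here is a minimal template-based sketch. It is not a language model: it only computes period-over-period movements and fills a sentence template for those above a threshold, leaving the actual explanation to the reviewer. The function name, the 10% threshold, and the balances are hypothetical.

```python
def variance_commentary(current, prior, pct_threshold=10.0):
    """Draft one line of commentary per account whose change meets the threshold."""
    lines = []
    for account, cur in current.items():
        prev = prior.get(account)
        if not prev:  # skip accounts with no prior balance (or a zero balance)
            continue
        change = cur - prev
        pct = change / abs(prev) * 100
        if abs(pct) >= pct_threshold:
            direction = "increased" if change > 0 else "decreased"
            lines.append(
                f"{account} {direction} {abs(pct):.1f}% "
                f"({prev:,.0f} to {cur:,.0f}); explanation pending controller review."
            )
    return lines


# Hypothetical balances for illustration.
prior = {"Revenue": 1_000_000, "Travel expense": 60_000, "Rent": 60_000}
current = {"Revenue": 1_150_000, "Travel expense": 42_000, "Rent": 60_500}

lines = variance_commentary(current, prior)
for line in lines:
    print(line)
```

Rent moves less than 1% and is left out, so the draft covers only the movements worth a reviewer's attention.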

Intercompany elimination for companies with multiple entities is time-consuming and error-prone when done manually. AI tools that read subledger data across entities can identify mismatches, suggest elimination entries, and flag situations where intercompany balances don't agree, catching issues in the close rather than during audit.
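The core check behind mismatch detection is simple: what one entity records as owed by a counterparty should mirror what the counterparty records as owing. The sketch below assumes balances keyed by (holder, counterparty) with receivables positive and payables negative; that layout, the entity codes, and the tolerance are assumptions for illustration.

```python
def intercompany_mismatches(balances, tolerance=0.01):
    """Return [((entity_a, entity_b), net_difference)] for pairs that do not net to zero."""
    mismatches = []
    seen = set()
    for (holder, counterparty), amount in balances.items():
        pair = tuple(sorted((holder, counterparty)))
        if pair in seen:
            continue  # each entity pair is checked once
        seen.add(pair)
        mirror = balances.get((counterparty, holder), 0.0)
        diff = round(amount + mirror, 2)  # a receivable and its mirror payable should net to zero
        if abs(diff) > tolerance:
            mismatches.append((pair, diff))
    return mismatches


# Hypothetical balances: positive = receivable from counterparty, negative = payable to it.
balances = {
    ("US", "UK"): 50_000.00,
    ("UK", "US"): -50_000.00,
    ("US", "DE"): 12_500.00,
    ("DE", "US"): -12_000.00,
}
mismatches = intercompany_mismatches(balances)
print(mismatches)
```

Here the US and DE entities disagree by 500, which surfaces during the close instead of during audit.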

Disclosure roll-forwards, which update the prior year's disclosure language for the current filing period, are among the most time-consuming tasks in SEC reporting. AI tools like Finrep automate the structural comparison of prior-year to current-year draft, identifying sections that need updating, benchmarking language against what peers filed for the same period, and generating cited first-draft language grounded in EDGAR data.
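One narrow piece of the structural comparison, spotting sections that may be stale, can be illustrated with plain string checks. This sketch is not how Finrep works internally (the source does not describe that); it only flags sections that are unchanged from the prior year, still mention the prior fiscal year, or have no prior-year baseline. All names and sample text are hypothetical, and a prior-year reference may be perfectly legitimate in comparative disclosures, so the output is a review prompt rather than an error list.

```python
import re


def stale_sections(prior, current, prior_year):
    """Return {section: [reasons]} for current-year sections that may need attention."""
    flags = {}
    for name, text in current.items():
        reasons = []
        if name not in prior:
            reasons.append("new section, no prior-year baseline")
        elif prior[name] == text:
            reasons.append("unchanged from prior year")
        if re.search(rf"\b{prior_year}\b", text):
            reasons.append(f"still references {prior_year}")
        if reasons:
            flags[name] = reasons
    return flags


# Hypothetical disclosure text for illustration.
prior = {
    "Leases": "As of December 31, 2025, lease liabilities were $40 million.",
    "Revenue": "Revenue is recognized when control transfers.",
}
current = {
    "Leases": "As of December 31, 2025, lease liabilities were $40 million.",
    "Revenue": "Revenue is recognized when control transfers to the customer.",
    "AI risk": "We use AI tools in our operations.",
}
flags = stale_sections(prior, current, prior_year=2025)
for section, reasons in flags.items():
    print(section, reasons)
```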

How Does AI Change the SEC Reporting Workflow Specifically?

AI transforms SEC reporting in three phases: pre-draft research, where peer benchmarking and comment letter analysis drop from two to four days to under an hour per topic; first-draft generation, where disclosure language is grounded in current EDGAR peer data with citation links; and post-draft review, where AI flags divergences from peer practice and cross-reference errors before the filing team review.

AI changes the SEC reporting workflow in three specific phases: research and benchmarking before drafting, first-draft generation, and post-draft review.

Pre-draft research has historically consumed the most time. Before writing a disclosure, a reporting manager needs to know how comparable peers are handling the same topic: what language is current, what the SEC has been flagging in comment letters on this topic, and whether a new accounting standard (like ASC 842 for leases, or new climate disclosure guidance) has changed practice since the prior filing. Manually, this is 2–4 days of EDGAR search and review per disclosure topic. With an AI tool like Finrep, it's 30–45 minutes, because Fina, Finrep's AI assistant, queries EDGAR natively, applies semantic search to surface relevant peer filings, and returns section-level extracts with citation links back to the source.

First-draft generation is where AI has moved from experimental to operational for many teams. AI-generated first drafts, when grounded in peer EDGAR data rather than the model's training data, provide a starting point that reflects current practice and can be cited back to source. This matters for audit. A draft disclosure that says "we benchmarked this language against 10-K, Item 1A, filed February 2026" is auditor-ready. A draft from a general-purpose AI model is not, because there's no verifiable source behind it.

Post-draft review is where AI flags potential issues before the filing team sees them. This includes identifying language that diverges significantly from peer practice (a potential comment letter trigger), checking that disclosures cross-reference correctly within the filing, and flagging terms that have changed meaning under updated SEC guidance. The SEC's Division of Corporation Finance has not restricted AI use in the drafting process. The certification requirement on the final filing is unchanged, and AI tools that improve the quality of the research and drafting behind that certification are consistent with it.

The combined effect: teams using Finrep's AI-powered financial disclosure intelligence platform report their 10-K preparation cycle compressing from 10 days to 3–4 days, with 60–70% fewer review loops with auditors.

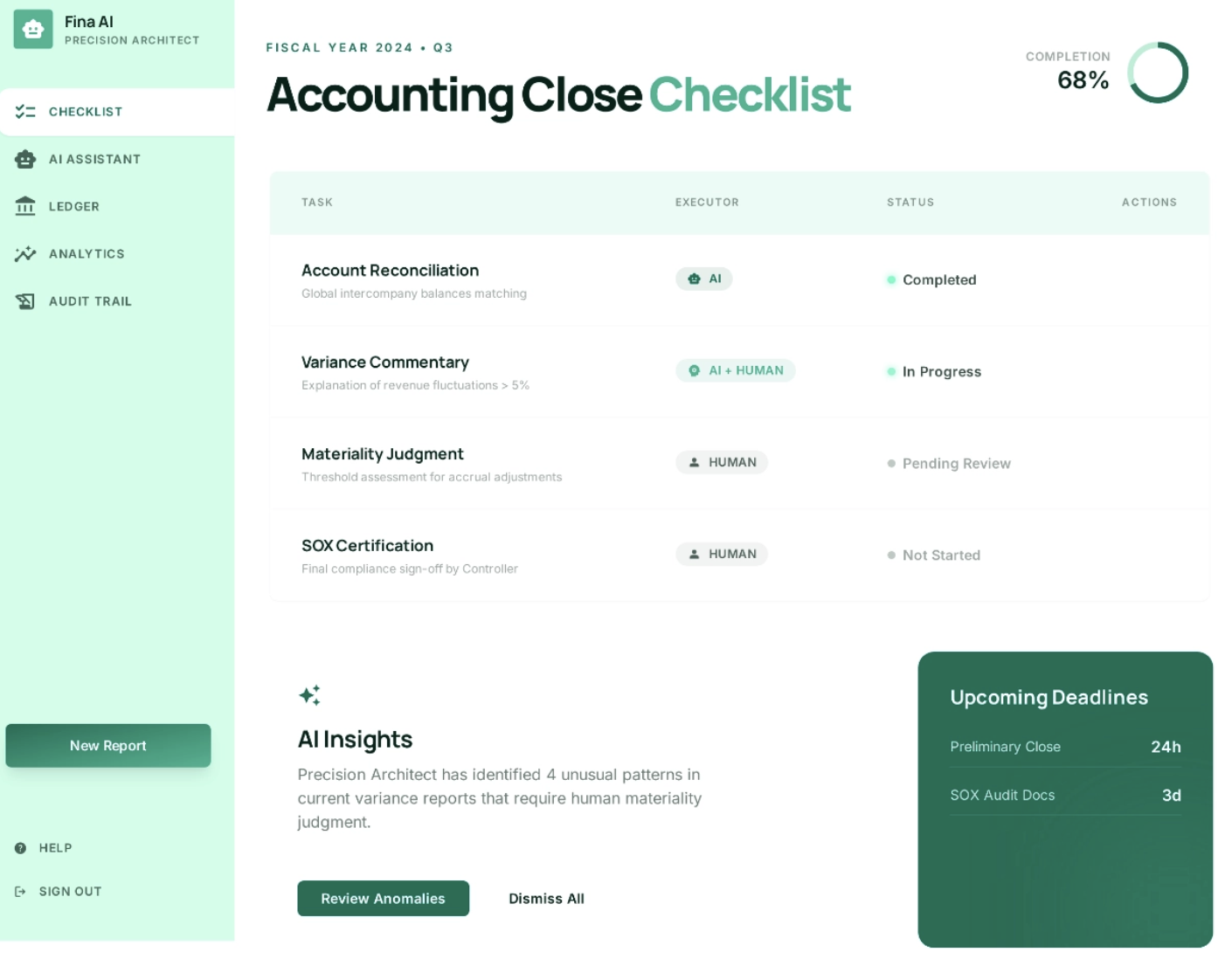

What Is an AI-Assisted Close Checklist and What Should It Include?

An AI-assisted close checklist is a structured sequence of close tasks, each assigned to AI execution, AI-assisted human review, or human-only judgment. AI tools handle the retrieval, pattern-matching, and first-draft layers; human accountants handle the materiality, policy, and certification layers.

Here is a practical checklist structure for a team running a quarterly or annual close cycle with AI tools integrated:

Phase 1: Subledger and Account Close

- AI reconciliation tool runs full-population transaction matching against prior-period patterns

- Exceptions above materiality threshold flagged for human review

- Accounts with zero exceptions auto-certified by AI; controller spot-checks 10%

- Intercompany balance mismatches identified by AI; finance team resolves discrepancies

- Journal entry population scanned by AI for anomalies; flagged items reviewed by controller

Phase 2: Management Reporting

- AI generates first-draft variance commentary for all significant account movements

- FP&A team reviews, edits, and approves variance commentary

- AI cross-checks management report figures against subledger closes

- Dashboard (Finrep) updated with close status and open items

Phase 3: Financial Statement Preparation

- AI performs structural roll-forward: prior-year financial statements updated for current period

- AI flags disclosure sections requiring substantive update (new standard adoptions, material events)

- Fina runs EDGAR peer benchmark for each updated disclosure section

- Benchmark outputs reviewed by reporting manager; material deviations from peer practice documented

- First-draft disclosure language generated by Fina, citation-linked to EDGAR source filings

- Technical accounting team reviews all AI-generated draft language

- ASC standard compliance checked against the FASB Accounting Standards Codification

Phase 4: SEC Filing and Review

- AI cross-references all internal cross-references in the filing draft

- Fina scans EDGAR comment letter history for the company and peers on flagged disclosure topics

- Legal and accounting review of complete draft - human judgment only, no AI substitution

- Officer certifications (SOX 302 and 906) completed - human-only step

- EDGAR filing submitted; Finrep Dashboard updated to reflect filing status
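The three lanes in the checklist above can be modeled as a small data structure, which makes the rule "human steps need a named sign-off" enforceable rather than implied. This is a sketch under assumptions: `Lane`, `Task`, and `filing_blockers` are hypothetical names, and the sign-off rule is one reasonable reading of the checklist, not a documented Finrep feature.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Lane(Enum):
    AI = "AI execution"
    AI_ASSISTED = "AI-assisted human review"
    HUMAN_ONLY = "human-only judgment"


@dataclass
class Task:
    phase: int
    name: str
    lane: Lane
    done: bool = False
    signed_off_by: Optional[str] = None  # expected for any lane that involves a human


def filing_blockers(tasks):
    """Return the names of tasks that should block the EDGAR submission."""
    blockers = []
    for t in tasks:
        needs_sign_off = t.lane is not Lane.AI
        if not t.done or (needs_sign_off and not t.signed_off_by):
            blockers.append(t.name)
    return blockers


# Hypothetical close status for illustration.
tasks = [
    Task(1, "Full-population transaction matching", Lane.AI, done=True),
    Task(2, "Variance commentary review", Lane.AI_ASSISTED, done=True),
    Task(3, "Review AI-drafted disclosure language", Lane.AI_ASSISTED,
         done=True, signed_off_by="Technical accounting"),
    Task(4, "Officer certifications (SOX 302 and 906)", Lane.HUMAN_ONLY),
]
blockers = filing_blockers(tasks)
print(blockers)
```

The variance review is marked done but has no named reviewer, so it still blocks filing; that is the distinction between AI finishing a step and a human owning it.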

How Does Ask Fina Fit Into the Accounting Close and Reporting Workflow?

Ask Fina is a natural-language AI interface for EDGAR research and disclosure benchmarking, used at three points in the close workflow: before drafting to benchmark peer disclosure practice, after receiving an SEC comment letter to find peer response precedents, and during review to identify divergences from current disclosure norms. It replaces two to four hours of manual EDGAR search with a 10 to 15 minute interaction.

Ask Fina is Finrep's natural-language AI interface for SEC filing research and disclosure benchmarking. You ask a question the way you'd ask a colleague ("How are enterprise software companies disclosing AI-related risks in Item 1A right now?") and Fina searches EDGAR natively, surfaces the most relevant peer filings by recency and company profile, extracts the specific section, and returns structured output with citation links.

In the close and reporting workflow, Fina is most useful at three specific moments.

Before drafting a disclosure section: Ask Fina to benchmark current peer practice on the topic: what language direct peers are using, what has changed since the prior filing period, and whether the SEC has been issuing comment letters in this area. This replaces 2–4 hours of manual EDGAR search with a 10–15 minute interaction.

When you receive an SEC comment letter: Ask Fina to find peer responses to similar comments. Comment letter correspondence is public in EDGAR, and Fina can surface relevant peer response letters on the same accounting topic within minutes. Deloitte's 2025 roadmap to SEC comment letter considerations identifies MD&A, segment reporting, and EPS as the three most frequently commented-on areas; Fina can run targeted searches across all three simultaneously.

When reviewing a draft for peer alignment: Paste a disclosure section into Fina and ask it to identify how your language compares to current peer practice on the same topic. Fina surfaces divergences: sections where your disclosure is materially shorter, uses different terminology, or omits elements that have become standard in the peer set. This is both a quality check and early preparation for the conversation your auditors will want to have.

What Are the Limits of AI in Accounting and What Must Stay Human?

AI cannot determine materiality, make accounting policy elections, or sign SOX certifications. These tasks require human judgment grounded in company-specific facts, industry context, and officer accountability under Sarbanes-Oxley Sections 302 and 906. General-purpose AI tools also carry hallucination risk, producing plausible but incorrect accounting guidance that can compromise filings if used without independent verification.

AI in accounting is not a replacement for accounting judgment. The limits are real and matter for compliance.

AI cannot determine materiality. Materiality under GAAP (Generally Accepted Accounting Principles) is a judgment — one that depends on the specific facts and circumstances of your company, your industry, your investor base, and the current enforcement climate. The SEC's Staff Accounting Bulletin No. 99 provides guidance on materiality assessments that requires qualitative and quantitative analysis. AI can surface that a disclosure treatment differs from peer practice; it cannot determine whether that difference is material to your investors.

AI cannot make accounting policy elections. When you adopt a new ASC standard, the election you make (full retrospective vs. modified retrospective transition, for example) is a policy decision with multi-period implications. AI tools can surface how peers made the same election and what the disclosure looked like; the election itself requires your technical accounting team.

AI cannot sign SOX certifications. The certifications under Sections 302 and 906 of the Sarbanes-Oxley Act require a named officer to attest to the accuracy and completeness of the filing. No AI tool can substitute for that accountability. The SEC has not indicated any willingness to modify this requirement. According to SEC enforcement data, SOX certification violations remain among the most frequently charged offenses in financial fraud cases (SEC, 2023).

AI hallucination risk in unverified outputs. General-purpose AI tools (ChatGPT, Gemini, and others) can and do generate plausible-sounding accounting guidance that is factually wrong. The risk in a close or reporting context is significant: a team member who asks a general AI "what's the ASC 606 treatment for this contract modification" may get a confident, detailed, incorrect answer. The FASB Accounting Standards Codification is the authoritative source for all accounting policy questions. Purpose-built tools like Finrep avoid this by grounding every output in EDGAR source documents rather than model training data.

PCAOB audit implications. Your external auditors are increasingly asking about AI tool usage in the close and reporting process. The PCAOB's current auditing standards do not prohibit AI use in the preparation process, but auditors expect to be able to trace every disclosure back to a verifiable source. Finrep's citation-linked outputs are designed to satisfy this requirement. Black-box AI outputs are not.

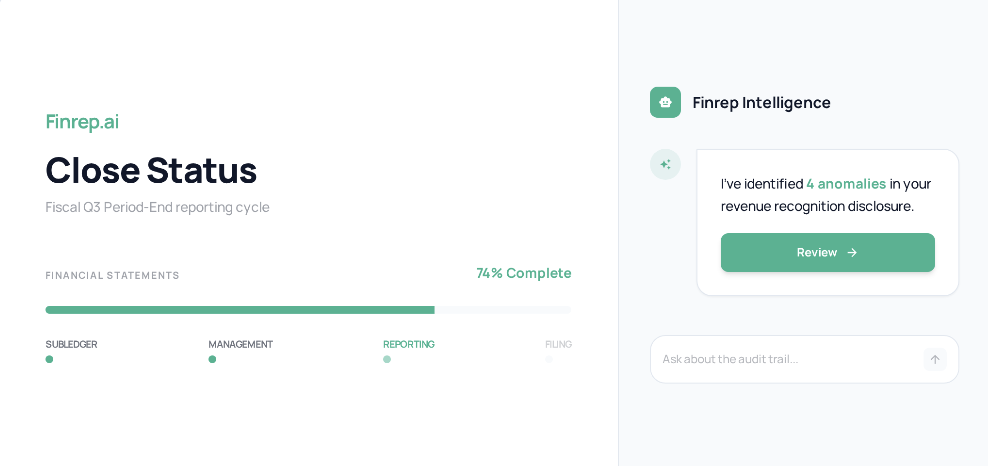

How Does Finrep's Dashboard Connect the Close Cycle to SEC Reporting?

Finrep's Dashboard is the operational view that connects the accounting close to the SEC filing, giving the Controller, VP of Finance, and SEC reporting manager a single place to track close task status, filing readiness, and open items across the cycle.

The Dashboard surfaces three things that typically require multiple systems and manual status calls to assemble: which close tasks are complete vs. open, which disclosure sections have been benchmarked and drafted, and which filing deadlines are approaching with unresolved items.

For teams running quarterly 10-Q cycles and annual 10-K cycles simultaneously, the Dashboard's value is in preventing the things that fall through the cracks: the section that was rolled forward but never benchmarked, or the comment letter response that was drafted but never reviewed before the deadline.

The practical outcome: reporting teams using Finrep's Dashboard report meaningful reductions in the status-meeting overhead that typically consumes 20–30% of the close manager's time, because the status is visible in the tool rather than assembled from email chains and spreadsheets before each call.

Is AI-Assisted Financial Reporting Acceptable to Auditors and the SEC?

Yes, with the right tool design. The SEC has not prohibited AI use in the financial reporting process. The accountability framework is unchanged: management certifies the accuracy of every filing, and AI tools that improve the quality of the research and drafting behind that certification are consistent with the requirement.

The distinction that matters to auditors is between outputs that are traceable and outputs that are not. A Fina-generated disclosure benchmark that links to ten EDGAR source filings is traceable: an auditor can click through, read the sources, and verify the benchmark independently. A ChatGPT output that says "here's how SaaS companies typically disclose AI risk" with no source citations is not traceable and not audit-ready.

The Harvard Law School Forum on Corporate Governance has covered the evolving relationship between AI tools and financial disclosure quality in multiple recent articles; it remains the best independent resource for following regulatory and governance developments on this topic.

Companies including ON Semiconductor and Hewlett Packard Enterprise have publicly disclosed AI use in their financial reporting workflows. The practice is increasingly standard at companies at the forefront of reporting efficiency. The differentiator is not whether AI is used; it's whether the AI tool produces verifiable, sourced outputs that hold up to auditor scrutiny.

For reference on how AI tools fit within the broader internal controls framework, the Center for Audit Quality's research on audit quality and disclosure practices provides practical guidance for audit committees and controllers considering AI adoption.

Frequently Asked Questions: AI in Accounting and the Close Cycle

What is AI in accounting and how does it differ from traditional accounting automation?

AI in accounting refers to the use of machine learning, NLP, and large language models to automate or augment accounting close and reporting tasks. Unlike traditional automation (RPA), which follows fixed rules and breaks when inputs vary, AI systems learn from data, handle unstructured inputs, and adapt to variation, making them suitable for tasks like journal entry anomaly detection, disclosure benchmarking, and variance commentary generation that rule-based tools cannot handle.

Which tasks in the accounting close can AI handle today?

AI is most reliably applied to: account reconciliation (full-population matching against prior-period patterns), journal entry anomaly detection, variance commentary first drafts, intercompany elimination mismatch identification, and disclosure roll-forwards. Tasks requiring materiality judgment, accounting policy elections, and officer certifications remain human-only.

How does AI improve the SEC reporting workflow?

AI shortens the pre-draft research phase (peer benchmarking, comment letter analysis) from 2–4 days to under an hour per topic. It enables first-draft disclosure generation grounded in current EDGAR peer data with citation links that make outputs audit-ready. Post-draft, AI can flag divergences from peer practice before the filing team's review. Finrep's EDGAR-native AI reduces the full 10-K preparation cycle from 10 days to 3–4 days.

Can AI-generated content be used in SEC filings?

Yes, when the AI tool links outputs to verifiable EDGAR sources. The SEC requires that all disclosures be accurate and that management certify the filing. AI tools that generate disclosure language grounded in public peer filings are audit-ready. General-purpose AI tools that generate language from training data without source citations are not appropriate for use in final filings without independent verification.

What is Ask Fina and how does it fit into the close workflow?

Fina is Finrep's natural-language AI interface for EDGAR research and disclosure benchmarking. You ask Fina a question in plain language, such as "benchmark our revenue recognition disclosure against direct peers," and Fina searches EDGAR natively, returns relevant peer filing extracts, and links every output to its EDGAR source. In the close workflow, Fina is used before drafting to benchmark peer practice, after receiving an SEC comment letter to find peer response precedents, and during review to identify divergences from current disclosure norms.

How does Finrep's Dashboard support the accounting close?

Finrep's Dashboard gives controllers and SEC reporting managers a single view of close cycle status from subledger reconciliation through filing readiness. It surfaces which tasks are open, which disclosure sections are drafted and reviewed, and which deadlines are approaching with unresolved items. Teams using the Dashboard report meaningful reductions in manual status tracking overhead.

Key Takeaways

- AI in accounting is changing the close cycle at the retrieval and pattern-matching layer, not the judgment layer. Reconciliation, journal entry review, variance commentary, and disclosure benchmarking are the highest-impact areas today.

- The SEC reporting workflow benefits most from AI in three phases: pre-draft research (peer benchmarking), first-draft generation (grounded in EDGAR), and post-draft review (divergence flagging).

- For audit-readiness, AI tools must link outputs to verifiable source documents. General-purpose AI tools cannot satisfy this requirement. Purpose-built platforms like Finrep can.

- The AI-assisted close checklist separates tasks into three lanes: AI execution, AI-assisted human review, and human-only judgment. SOX certifications and materiality determinations always belong in the human-only lane.

- Ask Fina, Finrep's EDGAR-native AI, replaces 2–4 hours of manual peer research with a 10–15 minute interaction, with citation-linked outputs your auditors can verify independently.

- Teams using Finrep report 10-K preparation cycles compressing from 10 days to 3–4 days, with 60–70% fewer auditor review loops.

Finrep is an AI-powered financial disclosure intelligence platform for the Office of the CFO: SOC 2 Type II and ISO 27001 certified, zero data residency, backed by Accel. Clients include FOX, Roku, HP, RingCentral, Infosys, and Sixt.

[Request access to the Finrep platform](https://www.finrep.ai/request-access).